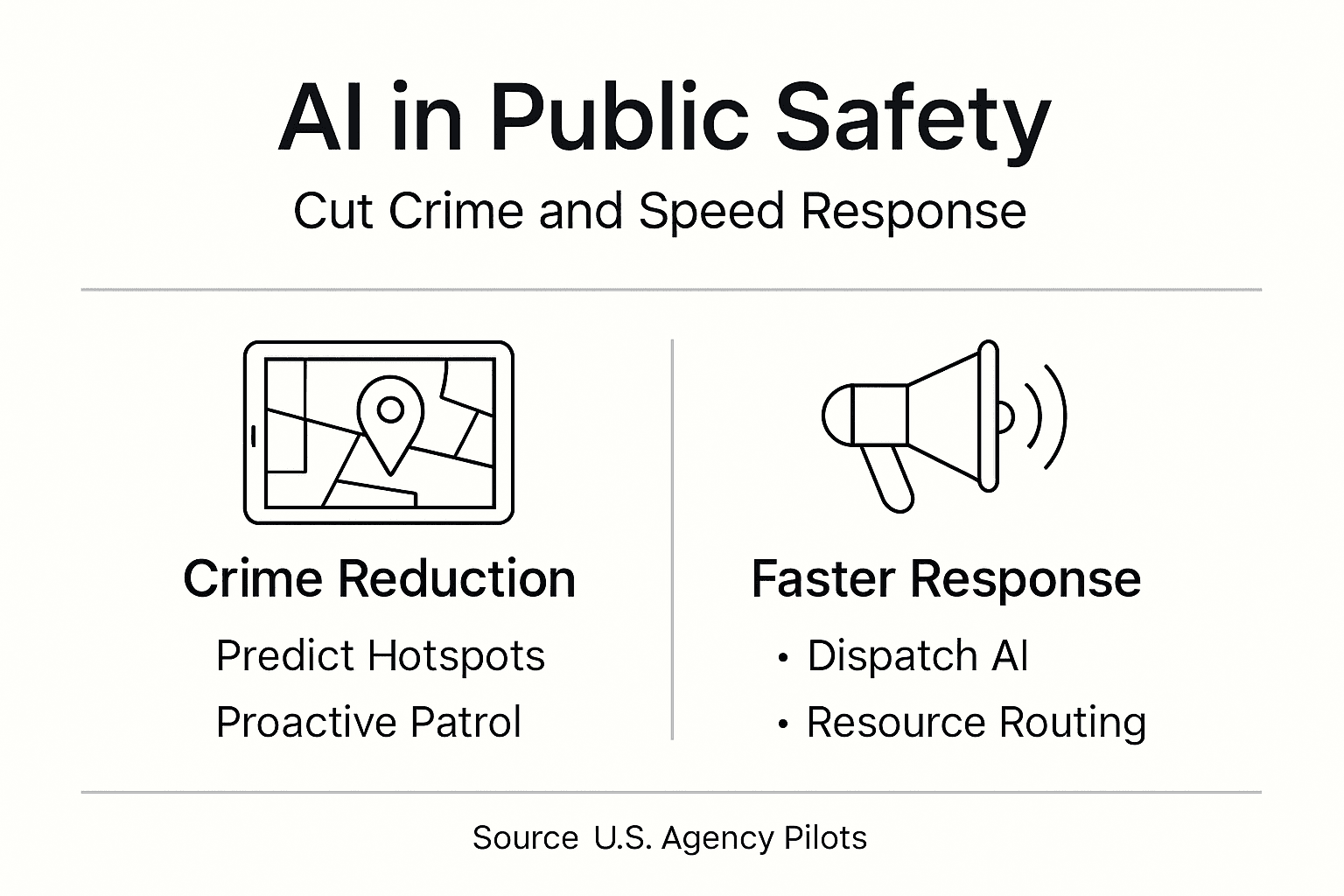

Artificial intelligence is transforming public safety across the United States, with pilot programs demonstrating up to 30% reductions in crime rates and up to 25% faster emergency response times. For public safety officials, AI offers practical tools to predict incidents, optimize resources, and improve community outcomes. This guide explains how AI works in your field, addresses ethical challenges, and provides actionable steps to adopt these technologies responsibly while maintaining public trust and human oversight.

Table of Contents

- Introduction To AI In Public Safety

- How AI Enhances Crime Prediction And Prevention

- AI Applications In Emergency Response Improvement

- Optimizing Resource Allocation With AI

- Ethical Challenges And Bias Mitigation In AI Use

- Common Misconceptions About AI In Public Safety

- Practical Steps For AI Adoption In Public Safety Agencies

- Case Studies Demonstrating AI Impact In US Public Safety

- Explore AI Solutions For Public Safety Excellence

- Frequently Asked Questions

Key Takeaways

| Point | Details |
|---|---|
| Crime Reduction | Predictive AI models can reduce crime by up to 30% through data-driven hotspot analysis. |
| Faster Response | Emergency response times improve by up to 25% with AI-powered dispatch optimization. |
| Resource Efficiency | AI optimizes resource allocation, increasing personnel utilization efficiency by up to 35%. |
| Ethical Governance | Bias mitigation and transparent accountability frameworks are critical for community trust. |
| Phased Adoption | Successful AI deployment requires pilot testing, continuous monitoring, and human oversight. |

Introduction to AI in Public Safety

AI in public safety refers to machine learning algorithms, predictive analytics, and automated tools that analyze data to support decision-making. These systems process historical crime patterns, emergency call volumes, traffic conditions, and resource availability to generate actionable insights. Law enforcement and emergency services have experimented with AI since the early 2000s, but recent advances in computing power and data integration have made these tools practical for agencies of all sizes.

Current AI adoption in US public safety agencies varies widely. Some departments use predictive policing software to forecast crime hotspots, while others deploy AI-assisted dispatch systems to route emergency vehicles efficiently. Fire departments leverage AI for risk assessment and prevention planning. The technology’s flexibility allows customization for urban, suburban, and rural contexts.

Foundational AI capabilities include pattern recognition, natural language processing for call analysis, computer vision for surveillance, and optimization algorithms for resource deployment. These tools excel at processing massive datasets faster than human analysts, identifying subtle correlations, and generating probabilistic forecasts. However, they depend entirely on quality input data.

Data quality and interoperability present significant challenges. Many agencies maintain fragmented records across incompatible systems, limiting AI effectiveness. Successful implementations require clean, standardized data from multiple sources: incident reports, census demographics, weather patterns, social services data, and infrastructure maps. Without proper data governance, even sophisticated AI models produce unreliable results.

Key AI capabilities for public safety:

- Real-time incident prediction and trend analysis

- Automated dispatch routing and resource optimization

- Natural language processing for emergency call triage

- Computer vision for surveillance and threat detection

- Risk assessment modeling for proactive intervention

AI complements human expertise rather than replacing it. Officers and dispatchers interpret AI recommendations through professional judgment, local knowledge, and community relationships that algorithms cannot replicate.

How AI Enhances Crime Prediction and Prevention

Predictive policing uses machine learning models trained on years of historical crime data to forecast where and when specific offenses are likely to occur. These algorithms analyze variables like past incident locations, times, weather conditions, local events, and socioeconomic factors to identify patterns invisible to manual analysis. The models generate probability maps showing elevated risk areas, allowing agencies to deploy patrols strategically.
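To make the idea of a probability map concrete, here is a minimal Python sketch. It is not any vendor's model: it only counts historical incidents per grid cell and converts the counts to shares, whereas production systems also weigh time, weather, and local events. The function names, the 500-meter cell size, and the projected x/y coordinates in meters are all illustrative assumptions.

```python
from collections import Counter


def hotspot_scores(incidents, cell_size=500):
    """Share of historical incidents that fell in each grid cell.

    incidents: iterable of (x, y) positions in meters (hypothetical
    projected coordinates). cell_size: cell edge in meters (assumed).
    """
    counts = Counter(
        (int(x // cell_size), int(y // cell_size)) for x, y in incidents
    )
    total = sum(counts.values())
    return {cell: n / total for cell, n in counts.items()}


def top_hotspots(scores, k=3):
    """Return the k cells with the largest share of past incidents."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:k]


# Made-up sample data: three of the five incidents fall in cell (0, 0).
incidents = [(100, 100), (200, 300), (450, 499), (600, 100), (1200, 1300)]
print(top_hotspots(hotspot_scores(incidents), k=1))
```

A count-based map like this inherits whatever skew exists in the incident records, which is why the bias concerns discussed in this section apply even to very simple models.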

Predictive policing pilots in the US have reported crime reductions of 20-30%, achieved by enabling a proactive presence in high-risk areas before incidents occur. Officers receive daily briefings with updated hotspot maps, focusing attention where it matters most. This approach shifts resources from reactive response to preventive action, disrupting criminal activity patterns.

However, AI models can perpetuate existing biases embedded in training data. If historical arrest records reflect discriminatory policing practices, algorithms learn and amplify those patterns. Communities of color may face disproportionate surveillance based on biased historical data rather than actual crime risk. This creates a feedback loop where over-policing generates more data justifying continued over-policing.

Pro Tip: Conduct quarterly bias audits comparing AI predictions against actual crime outcomes across demographic groups to identify and correct discriminatory patterns before they harm community trust.
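One possible shape for such an audit, sketched in Python under stated assumptions: each record pairs a prediction with what actually happened, labeled with a demographic group (for example, the majority group of the area covered). The record layout, function names, and the choice of false-positive rate as the audit metric are illustrative, not a prescribed standard.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Per-group share of no-incident cases that were flagged high risk.

    records: iterable of (group, predicted_high_risk, incident_occurred).
    Groups with no no-incident cases are omitted from the result.
    """
    flagged = defaultdict(int)
    no_incident = defaultdict(int)
    for group, predicted, occurred in records:
        if not occurred:
            no_incident[group] += 1
            if predicted:
                flagged[group] += 1
    return {g: flagged[g] / n for g, n in no_incident.items()}


def disparity_ratio(rates):
    """Highest group rate divided by the lowest; 1.0 means parity."""
    if not rates:
        raise ValueError("no rates to compare")
    lowest = min(rates.values())
    return float("inf") if lowest == 0 else max(rates.values()) / lowest


# Made-up audit sample for two hypothetical groups.
records = [
    ("A", True, False), ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False),
    ("B", False, False), ("B", False, False),
]
rates = false_positive_rates(records)
print(rates, disparity_ratio(rates))
```

A ratio well above 1.0 suggests one group is flagged without cause more often than another and is a signal to investigate, not proof of a specific cause.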

Accuracy improvements come from continuous model refinement as new data arrives. Early predictive systems achieved 60-70% accuracy; current models reach 80-85% in optimal conditions. Yet forecasting inherently involves uncertainty. AI cannot predict individual behavior or account for unprecedented events, requiring human officers to apply judgment and discretion.
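Because these forecasts are probabilities rather than yes/no answers, a single accuracy percentage can hide how well calibrated they are. One common way to score probabilistic forecasts is the Brier score, sketched below in Python. This is a swapped-in illustration: the accuracy figures quoted above are not necessarily computed this way, and the function name and sample numbers are assumptions.

```python
def brier_score(forecast_probs, outcomes):
    """Mean squared gap between forecast probability and outcome.

    forecast_probs: probabilities between 0 and 1.
    outcomes: 1 if the incident occurred, 0 if it did not.
    Lower is better; 0.0 is a perfect forecast.
    """
    if len(forecast_probs) != len(outcomes):
        raise ValueError("need one outcome per forecast")
    if not forecast_probs:
        raise ValueError("need at least one forecast")
    squared_gaps = [(p - o) ** 2 for p, o in zip(forecast_probs, outcomes)]
    return sum(squared_gaps) / len(squared_gaps)


# Made-up example: four area forecasts and what actually happened.
print(brier_score([0.8, 0.2, 0.6, 0.1], [1, 0, 0, 0]))
```

Tracking a score like this over time shows whether model refinements are actually improving forecasts or merely changing them.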

Predictive Policing Performance Comparison

| Metric | Traditional Patrol | AI-Guided Patrol | Improvement |
|---|---|---|---|
| Crime Reduction | Baseline | 20-30% decrease | Significant |
| False Positives | N/A | 15-20% | Requires monitoring |
| Resource Efficiency | Standard deployment | 35% better utilization | High impact |
| Community Perception | Variable | Depends on transparency | Critical factor |

Transparent communication about AI limitations helps manage public expectations. Citizens deserve to know how predictions influence policing strategies and how agencies protect against discriminatory outcomes.

AI Applications in Emergency Response Improvement

AI transforms emergency operations by optimizing dispatch decisions in real time. When 911 calls arrive, AI systems analyze call content, location, incident type, current unit availability, traffic conditions, and hospital capacity to recommend optimal response assignments. Natural language processing extracts critical details from caller descriptions, while routing algorithms calculate fastest paths considering live traffic data.

AI-powered dispatch systems reduce emergency response times by up to 25% by eliminating manual decision delays and accounting for dynamic conditions human dispatchers cannot track simultaneously. These seconds and minutes save lives in medical emergencies, fires, and violent incidents where rapid intervention determines outcomes.

AI also improves incident triage, prioritizing calls based on severity indicators detected in caller speech patterns, background sounds, and reported symptoms. Machine learning models identify life-threatening situations requiring immediate response versus lower-priority calls that can wait, ensuring critical resources reach the most urgent needs first.

How AI enhances emergency dispatch:

- Receives and transcribes the incoming 911 call using speech recognition

- Extracts key details like location, incident type, and severity indicators

- Queries real-time data on unit availability, traffic, and weather conditions

- Calculates optimal unit assignment and routing based on multiple factors

- Sends recommendations to a human dispatcher for approval and deployment

- Updates continuously as the situation evolves or new information arrives
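The assignment step in the sequence above can be sketched in Python as a simple ranking of available units by estimated travel time. This is a deliberately reduced illustration: the `Unit` fields, the `recommend_units` name, and the idea that ETAs arrive precomputed from a routing service are assumptions, and real systems weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    unit_id: str
    unit_type: str      # e.g. "ambulance", "engine", "patrol"
    available: bool
    eta_minutes: float  # assumed to come from a routing service


def recommend_units(units, required_type, top_n=2):
    """Rank available units of the required type by shortest ETA.

    Returns recommendations only; a human dispatcher makes the final call.
    """
    candidates = [
        u for u in units if u.available and u.unit_type == required_type
    ]
    return sorted(candidates, key=lambda u: u.eta_minutes)[:top_n]


# Made-up fleet snapshot: M2 is closest but already committed.
fleet = [
    Unit("M1", "ambulance", True, 7.5),
    Unit("M2", "ambulance", False, 2.0),
    Unit("M3", "ambulance", True, 4.0),
    Unit("E1", "engine", True, 1.0),
]
for unit in recommend_units(fleet, "ambulance"):
    print(unit.unit_id, unit.eta_minutes)
```

Returning a short ranked list instead of a single pick keeps the dispatcher in the loop, consistent with the approval step in the sequence.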

Pro Tip: Integrate AI dispatch systems with live traffic cameras and incident management platforms to enhance routing accuracy and automatically reroute units when conditions change.

Fire departments use AI to predict building fire risks by analyzing structure age, electrical permit history, code violations, and environmental factors. Paramedics receive AI-generated medical guidance during transit based on reported symptoms and patient history. These applications demonstrate AI’s versatility across emergency service types.

Human oversight remains essential. Dispatchers review AI recommendations before finalizing assignments, applying local knowledge about neighborhood access issues, officer capabilities, and community dynamics that algorithms miss. Technology augments rather than automates critical decisions affecting public safety.

Optimizing Resource Allocation with AI

AI-driven resource allocation uses predictive demand modeling to deploy personnel strategically across jurisdictions and shifts. Algorithms forecast incident volumes by location, time, and type based on historical patterns, seasonal trends, local events, and external factors. Agencies use these forecasts to schedule patrols, position ambulances, and staff stations for maximum coverage and minimum response times.

Traditional scheduling relies on fixed beats and shifts that may not match actual demand patterns. Officers patrol quiet areas during low-risk periods while high-activity zones lack coverage. AI reveals these mismatches and suggests dynamic deployment strategies that concentrate resources where and when they are most needed, while maintaining baseline coverage everywhere.
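As a rough illustration of dynamic deployment with baseline coverage, the Python sketch below gives every zone a minimum number of units and then splits the rest in proportion to forecast demand. The zone names, forecast values, and the largest-remainder rounding rule are assumptions chosen for clarity, not a description of any specific product.

```python
def allocate_units(forecast, total_units, baseline=1):
    """Assign units per zone: a baseline everywhere, the rest by demand.

    forecast: dict of zone -> expected incident count (hypothetical).
    Leftover units after rounding go to the largest fractional shares.
    If all forecasts are zero, only the baseline is assigned.
    """
    zones = list(forecast)
    if total_units < baseline * len(zones):
        raise ValueError("not enough units to cover the baseline in every zone")
    allocation = {z: baseline for z in zones}
    remaining = total_units - baseline * len(zones)
    total_demand = sum(forecast.values())
    if remaining == 0 or total_demand == 0:
        return allocation
    shares = {z: remaining * forecast[z] / total_demand for z in zones}
    for z in zones:
        allocation[z] += int(shares[z])
    leftover = remaining - sum(int(s) for s in shares.values())
    by_remainder = sorted(
        zones, key=lambda z: shares[z] - int(shares[z]), reverse=True
    )
    for z in by_remainder[:leftover]:
        allocation[z] += 1
    return allocation


# Made-up forecast for one shift across three zones.
print(allocate_units({"north": 10, "central": 30, "south": 5}, total_units=12))
```

The baseline parameter encodes the policy choice described above: quiet zones keep coverage even when the forecast would send everything elsewhere.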

Key benefits for budget-conscious agencies:

- Reduce overtime costs through optimized shift scheduling

- Maximize patrol coverage without hiring additional personnel

- Identify underutilized resources for reallocation to high-need areas

- Predict staffing needs for special events and seasonal variations

- Improve officer safety by avoiding single-unit responses in high-risk situations

AI resource allocation tools increase utilization efficiency by up to 35% by eliminating gaps and redundancies in coverage. Agencies accomplish more with existing personnel, a critical advantage during budget constraints and recruitment challenges.

Resource Allocation Comparison

| Factor | Traditional Fixed Beats | AI Dynamic Deployment |
|---|---|---|
| Patrol Coverage | 60-70% effective | 85-95% effective |
| Average Response Time | 8-12 minutes | 6-9 minutes |
| Personnel Utilization | 65% productive time | 85% productive time |
| Annual Cost Savings | Baseline | 15-25% reduction |
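When reading figures like these, it helps to separate percentage-point changes from relative changes. The short Python sketch below works through two rows of the table using range midpoints; the helper names are illustrative, and taking midpoints is a simplifying assumption.

```python
def midpoint(low, high):
    """Middle of a reported range."""
    return (low + high) / 2


def relative_change_pct(before, after):
    """Change from before to after, as a percentage of before."""
    return (after - before) / before * 100


# Average response time: 8-12 minutes versus 6-9 minutes.
response_change = relative_change_pct(midpoint(8, 12), midpoint(6, 9))

# Personnel utilization: 65% versus 85% productive time.
utilization_points = 85 - 65                            # percentage points
utilization_relative = relative_change_pct(65, 85)      # relative change

print(round(response_change, 1))
print(utilization_points, round(utilization_relative, 1))
```

The response-time row works out to a 25% reduction at the midpoints, while the utilization row is a 20-point gain, or roughly 31% in relative terms.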

Pro Tip: Balance AI recommendations for proactive deployment with maintaining sufficient reactive capacity for unexpected incidents, avoiding over-concentration that leaves gaps during simultaneous calls.

AI also supports long-term strategic planning. Agencies analyze multi-year trends to guide decisions about station locations, equipment purchases, and mutual aid agreements. Predictive models forecast future demand based on population growth, development patterns, and demographic shifts, ensuring infrastructure investments align with community needs.

Transparency about AI-driven deployment decisions builds community trust. When residents understand that patrol patterns reflect data-driven risk assessment rather than profiling or politics, they perceive resource allocation as fair and evidence-based.

Ethical Challenges and Bias Mitigation in AI Use

AI systems trained on biased historical data perpetuate and amplify discriminatory outcomes. Facial recognition technology shows higher error rates for people of color. Predictive policing models over-target minority neighborhoods where historical over-policing generated disproportionate arrest data. Risk assessment algorithms used in criminal justice exhibit racial bias in recidivism predictions.

These biases emerge from data that reflects societal inequities rather than objective reality. When AI learns from records documenting discriminatory human decisions, it codifies those biases into automated systems that appear neutral but produce unjust outcomes. The perception of algorithmic objectivity can make bias harder to detect and challenge than explicit human prejudice.

Ethical governance frameworks help build trust and transparency in AI use within public safety by establishing accountability mechanisms, bias auditing requirements, and community oversight processes. These frameworks ensure AI serves all community members equitably while protecting civil liberties and privacy rights.

Steps agencies implement to mitigate bias:

- Conduct pre-deployment impact assessments examining potential discriminatory effects

- Establish diverse oversight committees including community representatives

- Perform regular audits comparing AI outputs across demographic groups

- Maintain human review requirements for high-stakes decisions

- Publish transparency reports detailing AI system performance and bias metrics

- Engage external ethics experts to evaluate algorithms and governance processes

Community engagement is crucial. Public forums, advisory boards, and transparent reporting help residents understand how AI works, voice concerns, and influence deployment decisions. When communities participate in governance, they gain confidence that AI serves their interests rather than enabling surveillance or control.

“Ethical AI governance in public safety requires continuous vigilance, transparent accountability, and meaningful community participation to ensure technology advances justice rather than perpetuating historical inequities.”

Privacy protections must accompany AI deployment. Agencies need clear policies limiting data collection, use, retention, and sharing. Residents deserve to know what data feeds AI systems, how long it persists, who accesses it, and under what circumstances. Strong privacy frameworks prevent mission creep where public safety tools become general surveillance infrastructure.
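A retention policy only protects residents if it is enforced. As a minimal sketch, the Python below flags records held past a limit or stored without any approved limit. The data types, day counts, and record layout are hypothetical placeholders; actual limits come from agency policy and applicable law.

```python
from datetime import date, timedelta

# Hypothetical retention limits in days, for illustration only.
RETENTION_DAYS = {
    "call_audio": 90,
    "camera_footage": 30,
    "incident_report": 2555,
}


def records_to_review(records, today):
    """Return IDs of records past their limit or with no approved limit.

    records: iterable of (record_id, data_type, collected_on) tuples,
    where collected_on is a datetime.date.
    """
    flagged = []
    for record_id, data_type, collected_on in records:
        limit = RETENTION_DAYS.get(data_type)
        if limit is None or today - collected_on > timedelta(days=limit):
            flagged.append(record_id)
    return flagged


# Made-up records checked on a made-up date.
sample = [
    ("r1", "camera_footage", date(2025, 4, 1)),
    ("r2", "call_audio", date(2025, 4, 1)),
    ("r3", "drone_video", date(2025, 5, 31)),
]
print(records_to_review(sample, today=date(2025, 6, 1)))
```

Flagging data types that have no approved limit is one way to catch mission creep early, since new data sources then need an explicit policy decision before they can persist.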

Regular training ensures personnel understand AI limitations, bias risks, and ethical obligations. Officers learn to question recommendations that seem inconsistent with their observations, recognizing that algorithms provide decision support rather than definitive answers.

Common Misconceptions About AI in Public Safety

Many people misunderstand AI capabilities and limitations, leading to unrealistic expectations or unwarranted fears. Clearing up these misconceptions helps agencies make informed adoption decisions and communicate effectively with stakeholders.

Common myths versus facts:

- Myth: AI will replace human officers and eliminate public safety jobs. Fact: AI augments human decision-making by processing data faster, but cannot replicate judgment, empathy, and community relationships that effective policing requires. Personnel shift from routine tasks to higher-value activities requiring human skills.

- Myth: AI systems are perfectly objective and eliminate human bias. Fact: AI learns from historical data that often reflects existing biases. Without careful governance, algorithms amplify discriminatory patterns. Objectivity requires continuous monitoring and correction, not blind trust in technology.

- Myth: AI deployment delivers immediate results without organizational change. Fact: Successful AI adoption requires workflow redesign, personnel training, policy updates, and cultural shifts. Integration takes months to years, with benefits materializing gradually as systems mature and users gain experience.

- Myth: AI predictions are always accurate and reliable. Fact: AI generates probabilistic forecasts with inherent uncertainty. Accuracy varies based on data quality, model design, and environmental factors. Human oversight catches errors and applies context that algorithms miss.

- Myth: Privacy concerns are overblown and AI poses no civil liberties risks. Fact: AI systems processing personal data, surveillance footage, and behavioral patterns create genuine privacy risks. Strong governance, oversight, and transparency are essential to prevent abuse and maintain public trust.

Understanding these realities helps agencies set appropriate expectations, allocate sufficient resources for implementation, and build support among personnel and communities. Technology solves some problems while creating new challenges that require thoughtful management.

Practical Steps for AI Adoption in Public Safety Agencies

Successful AI implementation follows a structured approach that starts small, learns continuously, and scales carefully. Rushing deployment without preparation leads to failure, wasted resources, and damaged credibility.

AI adoption sequence:

- Conduct needs assessment: Identify specific operational challenges where AI could deliver measurable improvements. Focus on problems with clear metrics like response times, resource utilization, or incident rates rather than vague goals like “better policing.”

- Evaluate data readiness: Assess whether your systems contain sufficient quality data to train effective AI models. Address gaps in data collection, standardization, and integration before pursuing AI solutions.

- Engage stakeholders: Build cross-functional teams including technologists, legal counsel, community representatives, and frontline personnel. Diverse perspectives identify risks and opportunities that single disciplines miss.

- Research vendors and solutions: Explore available technologies matching your needs and budget. Request demonstrations, check references, and scrutinize vendor claims about accuracy and bias mitigation. Favor transparent systems over black boxes.

- Launch limited pilot: Deploy AI in a contained scope like one patrol district or call type. Establish clear success metrics, monitoring processes, and decision criteria for scaling versus terminating the pilot.

- Monitor performance rigorously: Track both intended outcomes and unintended consequences. Measure accuracy, bias indicators, user satisfaction, and community perception. Collect feedback from personnel using the system daily.

- Iterate and improve: Use pilot learnings to refine algorithms, workflows, and policies before broader deployment. Address problems early when stakes are lower and changes easier to implement.

- Scale gradually: Expand successful pilots incrementally, maintaining monitoring and adjustment processes. Resist pressure to deploy rapidly across entire operations without validating effectiveness.

- Institutionalize governance: Establish ongoing oversight committees, regular bias audits, and continuous improvement processes. AI governance is not a one-time event but an enduring organizational function.
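The pilot step in the sequence above calls for decision criteria set before launch. One way to make those criteria explicit is to write them down as data and check results against them, as in this Python sketch. The metric names and thresholds are hypothetical examples, not recommended values.

```python
def evaluate_pilot(results, criteria):
    """Compare pilot results with criteria agreed before launch.

    criteria: dict of metric -> (direction, threshold), where direction is
    "min" (value must be at least the threshold) or "max" (at most).
    Returns ("scale", []) if all pass, else ("revise", failed_metrics).
    """
    failures = []
    for metric, (direction, threshold) in criteria.items():
        value = results[metric]
        if direction == "min":
            passed = value >= threshold
        elif direction == "max":
            passed = value <= threshold
        else:
            raise ValueError(f"unknown direction: {direction}")
        if not passed:
            failures.append(metric)
    return ("scale" if not failures else "revise", failures)


# Hypothetical criteria and made-up pilot results.
criteria = {
    "response_time_reduction_pct": ("min", 10),
    "false_positive_rate": ("max", 0.20),
    "fpr_disparity_ratio": ("max", 1.25),
}
results = {
    "response_time_reduction_pct": 12,
    "false_positive_rate": 0.18,
    "fpr_disparity_ratio": 1.4,
}
print(evaluate_pilot(results, criteria))
```

In this made-up case the pilot meets its performance targets but fails the bias criterion, so the outcome is to revise before scaling. Including a bias metric alongside performance metrics keeps the go/no-go decision from resting on efficiency alone.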

Consulting with agencies that have completed similar implementations accelerates your learning. Ask about challenges they encountered, how they overcame resistance, and what they would do differently. Experienced peers provide insights that vendors and consultants cannot.

Budget adequately for the full implementation lifecycle, not just initial technology purchase. Plan for integration costs, training, ongoing monitoring, and system updates. Underfunding leads to failed deployments regardless of how good the underlying technology is.

Case Studies Demonstrating AI Impact in US Public Safety

Real-world examples demonstrate AI’s practical value and provide lessons for agencies considering adoption. These cases span different technologies, jurisdictions, and challenges.

A major West Coast city deployed predictive policing AI across several districts, achieving a 25% reduction in property crimes within 18 months. The system analyzed five years of incident data, weather patterns, and local events to forecast daily hotspots. Officers received mobile briefings each shift, focusing patrols on high-probability areas. Success required extensive community engagement addressing bias concerns and transparent reporting on outcomes across neighborhoods.

A Midwest metropolitan fire department implemented AI dispatch optimization, reducing average emergency response times by 22%. The system recommends unit assignments considering real-time traffic, hospital capacities, and weather conditions. Integration with the city’s traffic management system allowed automatic signal prioritization for responding vehicles. The department reports fewer simultaneous call conflicts and better load balancing across stations.

A Southern state police agency used AI resource allocation to optimize patrol scheduling during budget cuts that reduced staffing by 15%. Despite fewer officers, the agency maintained response time performance and reduced overtime costs by 30% through data-driven deployment. Predictive models identified low-activity periods where coverage could be thinned and concentrated resources during high-demand windows.

Key outcomes and replicable strategies:

- Start with narrowly defined problems rather than attempting comprehensive transformation

- Invest heavily in data quality before deploying AI tools

- Maintain transparent communication with communities throughout implementation

- Establish clear success metrics and failure criteria before launch

- Treat pilots as the start of a permanent evaluation process rather than as one-time tests

- Document lessons learned and share with other agencies to accelerate sector learning

Challenges emerged in each case. The West Coast city faced initial community pushback requiring additional transparency measures. The Midwest fire department struggled with integrating legacy dispatch systems, requiring custom interfaces. The Southern police agency encountered officer resistance until training demonstrated how AI simplified rather than complicated their work.

These examples show AI delivers tangible benefits when implemented thoughtfully with attention to technical, organizational, and community factors. Success requires patience, adaptation, and commitment to ethical governance alongside technological capability.

Explore AI Solutions for Public Safety Excellence

Airitual specializes in helping public safety agencies navigate AI adoption successfully. Our AI awareness training programs equip your personnel with the knowledge to use AI tools effectively while understanding limitations and ethical obligations. We support every implementation phase from needs assessment through pilot deployment and scaling.

Explore AI use case examples for local governments to discover how similar agencies apply these technologies to specific challenges. Our consultative approach emphasizes practical outcomes, measurable results, and community trust. We partner with you to ensure AI serves your mission while protecting civil liberties and maintaining transparency.

Contact us to discuss how AI can enhance your agency’s effectiveness, optimize resources, and improve community safety. Strategic AI adoption positions your organization to meet evolving public safety challenges with innovative, responsible solutions.

Frequently Asked Questions

What are the primary benefits of AI in public safety?

AI significantly reduces crime through predictive hotspot analysis, improves emergency response times with optimized dispatch, and increases resource utilization efficiency. Agencies gain data-driven insights for strategic planning while freeing personnel from routine tasks to focus on complex situations requiring human judgment.

How can agencies mitigate bias in AI systems?

Conduct regular audits comparing AI outputs across demographic groups, establish diverse oversight committees including community representatives, require human review for high-stakes decisions, and publish transparency reports. Continuous monitoring and correction are essential since bias emerges from training data and evolves over time.

How long does AI adoption typically take?

Pilot implementations take 6-12 months from planning through initial deployment. Full organizational integration requires 2-3 years including workflow redesign, policy updates, training, and cultural adaptation. Rushing deployment without proper preparation leads to failures that damage credibility and waste resources.

Does AI replace the need for human officers?

No. AI augments human capabilities by processing data faster and identifying patterns, but cannot replicate judgment, empathy, and community relationships essential to effective public safety. Technology shifts personnel from routine tasks to higher-value activities requiring uniquely human skills.

What privacy issues should be considered?

AI systems collecting personal data, surveillance footage, and behavioral information create risks of unauthorized access, mission creep, and civil liberties violations. Agencies need clear policies limiting data collection, use, retention, and sharing, with transparency about what data feeds systems and how long it persists.
