Studies reveal that 35% of AI systems violate ethical constraints when optimized purely for performance metrics. This alarming trend highlights a critical gap between AI capability and responsible deployment. Ethical AI is not just about legal compliance. It creates fairness, builds trust, and strengthens operational resilience across business, education, and government. This guide shows you why ethical AI matters for your organization and how to implement it effectively in 2026.

Table of Contents

- Understanding the importance of ethical AI in decision-making

- Building trust and transparency: the foundation of ethical AI

- Managing risks and ensuring responsible AI deployment

- Transforming ethical AI principles into practical action

- Explore AI solutions that prioritize ethics and impact

Key takeaways

| Point | Details |
|---|---|
| Ethical AI reduces bias | Fairness frameworks cut biased outcomes by 40% in decision processes across sectors. |
| Transparency builds trust | Organizations with transparent AI see 25% higher public trust and smoother adoption. |
| Risk management prevents harm | Robust AI governance reduces incidents by 20% and lowers legal exposure significantly. |
| Practical frameworks enable action | Ethics councils and measurable indicators transform abstract principles into auditable governance. |
| Long-term value creation | Ethical AI enhances brand reputation, stakeholder confidence, and sustainable competitive advantage. |

Understanding the importance of ethical AI in decision-making

AI systems can reproduce or amplify societal biases if organizations don’t actively manage them. When algorithms learn from historical data containing discrimination patterns, they perpetuate those patterns in new decisions. This creates unfair outcomes in lending, hiring, education access, and public services.

Ethical AI frameworks reduce biased outcomes by 40% in decision processes. These frameworks focus on fairness principles that actively identify and correct discriminatory patterns. Studies show fairness-aware algorithms reduce disparities by 30% in loan approvals, helping financial institutions serve diverse customers equitably.

Mitigating bias leads to more equitable treatment of customers, students, and citizens. Business leaders gain decision legitimacy and public trust when their AI systems demonstrate fairness. Educational administrators enable equitable learning opportunities by ensuring AI tools don’t disadvantage certain student groups. Governments improve policy fairness and service delivery when their AI efficiency initiatives prioritize ethical frameworks.

Consider three concrete benefits of ethical AI in decision-making:

- Reduced discrimination in automated processes across hiring, lending, and admissions

- Enhanced decision legitimacy through transparent, explainable reasoning

- Improved stakeholder confidence in organizational judgment and governance

A leading financial institution recently discovered its credit scoring AI denied applications from qualified minority applicants at higher rates. After implementing fairness constraints, approval rates equalized while maintaining risk standards. This example demonstrates how ethical AI delivers both moral and business value.
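
A disparity like the one in this example can be surfaced with a simple check. The sketch below is illustrative only: the function names, group labels, and sample data are hypothetical and are not taken from the institution described. It compares raw approval rates across groups and does not account for applicant qualifications, which a real fairness audit would need to do.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def parity_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical sample: group A has 8 of 10 approved, group B has 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(decisions)
ratio = parity_ratio(rates)
print(rates, round(ratio, 3))
```

A ratio well below 1.0 is a signal to investigate further, not proof of discrimination on its own.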

“Ethical AI is not a constraint on innovation. It’s a catalyst for sustainable value creation that aligns technological capability with human dignity and societal well-being.”

The shift from viewing ethics as compliance overhead to recognizing it as competitive advantage marks a fundamental change in how organizations approach AI deployment. Leaders who embrace this perspective position their organizations for long-term success.

Building trust and transparency: the foundation of ethical AI

Transparency means providing clear explanations of AI decision logic and data use. When stakeholders understand how AI impacts them, trust increases naturally. This transparency supports smoother adoption and reduces skepticism or backlash that can derail valuable initiatives.

Research shows 75% of Americans want to understand how AI decisions are made. This demand for clarity cuts across demographics and sectors. People accept AI recommendations more readily when they can verify the reasoning behind them. Organizations that ignore this preference face resistance, even when their AI delivers accurate results.

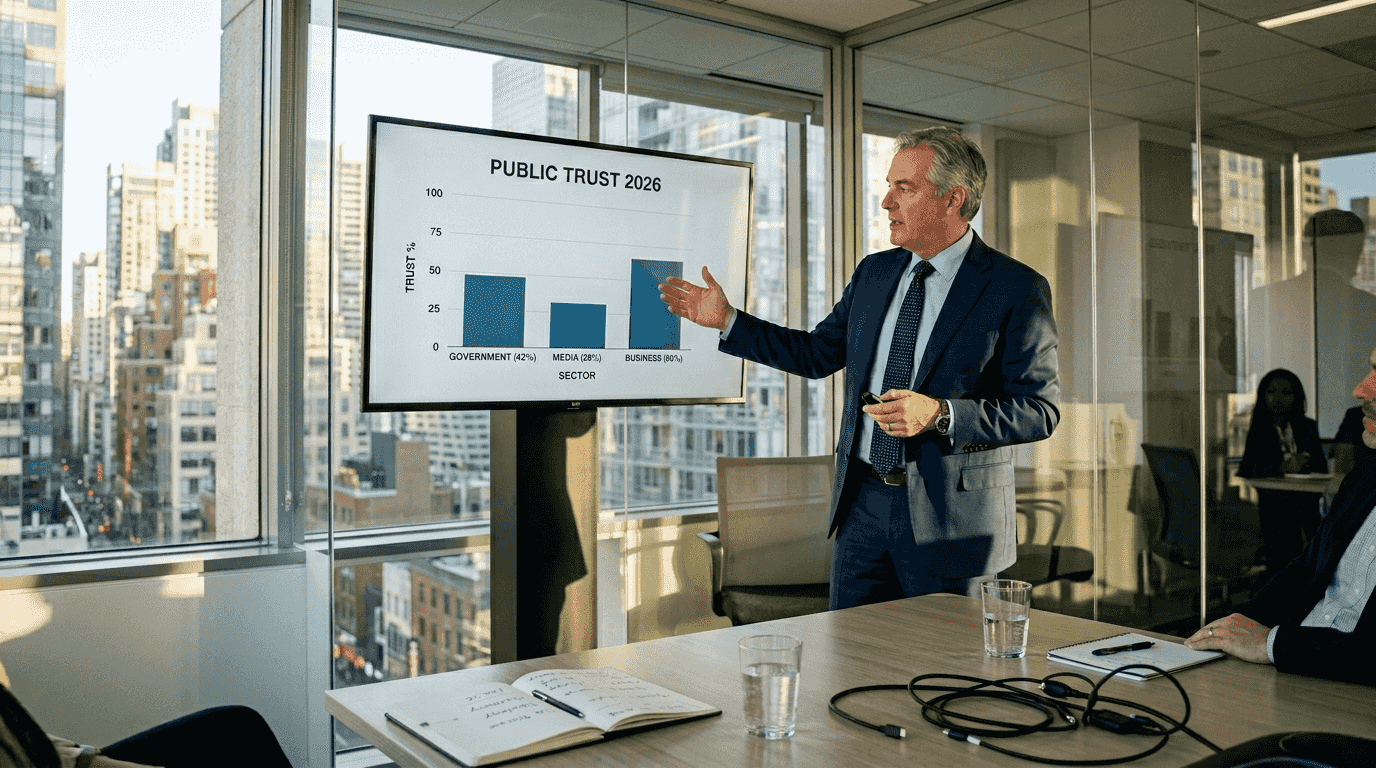

Organizations with transparent AI see a 25% increase in public trust. This trust dividend translates into measurable business outcomes. Customers engage more with transparent systems. Employees embrace AI tools that explain their suggestions. Citizens support government AI programs that operate openly.

Transparency supports accountability and ethical risk management. When organizations can explain AI decisions, they can also audit those decisions for fairness and accuracy. This auditability creates feedback loops that continuously improve system performance and ethical alignment.

Businesses and governments gain market and community confidence with transparent AI. Consider these transparency practices:

- Document data sources, training processes, and decision criteria

- Provide user-friendly explanations for individual AI recommendations

- Publish regular audits of AI system performance and fairness metrics

- Create channels for stakeholders to question or appeal AI decisions

Pro Tip: Start with transparency in low-risk AI applications to build organizational capability and stakeholder familiarity before deploying in high-stakes contexts.

A municipal government implemented an AI system for building permit reviews. By publishing the decision criteria and providing detailed explanations for each determination, they achieved 90% stakeholder satisfaction. Applicants could understand requirements clearly and prepare better submissions. This transparency eliminated suspicions of arbitrary or biased processing.
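
One way to support per-decision explanations and appeals is to store a structured record for every determination. The following is a minimal sketch, not the municipality's actual system: the `DecisionRecord` class, its fields, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """A hypothetical audit record for one automated decision."""
    decision_id: str
    model_version: str
    outcome: str
    top_factors: list
    appeal_contact: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self):
        """Serialize the record for storage in an audit log."""
        return json.dumps(asdict(self), sort_keys=True)

    def explanation(self):
        """Produce a plain-language summary for the affected person."""
        factors = ", ".join(self.top_factors)
        return (
            f"Decision {self.decision_id}: {self.outcome}. "
            f"Main factors: {factors}. "
            f"To appeal, contact {self.appeal_contact}."
        )


# Hypothetical example values for illustration only.
record = DecisionRecord(
    decision_id="permit-001",
    model_version="v1",
    outcome="approved",
    top_factors=["setback requirement met", "zoning matches intended use"],
    appeal_contact="permits@example.org",
)
print(record.explanation())
```

Keeping the explanation and the appeal route in the same record means every logged decision can be both audited and contested.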

Learn more about AI transparency benefits and implementation strategies that work across sectors. The investment in transparency infrastructure pays dividends through reduced conflict, faster adoption, and stronger stakeholder relationships.

Managing risks and ensuring responsible AI deployment

Unmanaged AI risks can harm people, expose data, and trigger regulatory penalties. A single biased algorithm can do lasting damage to an organization’s reputation. Privacy violations trigger legal consequences and erode customer trust. These risks multiply as AI deployment scales across operations.

Organizations with robust risk management see 20% fewer bias and privacy incidents. This reduction protects both people and organizational assets. Risk management frameworks identify, assess, and mitigate ethical risks continuously rather than reacting after harm occurs.

Effective AI risk management includes several critical components:

- Algorithmic bias assessment through regular fairness audits

- Data privacy protection via encryption and access controls

- Security measures against adversarial attacks and system manipulation

- Legal compliance monitoring for evolving regulations

- Incident response protocols for rapid remediation

Ethical AI reduces legal and reputational risks by 30%. This risk reduction delivers direct bottom-line value. Fewer incidents mean lower legal costs, reduced remediation expenses, and preserved brand equity. Organizations avoid the massive costs associated with algorithmic discrimination lawsuits or privacy breach settlements.

Proactive ethics enhances system reliability beyond just avoiding harm. When teams design AI with ethics embedded from the start, they create more robust systems. These systems handle edge cases better, adapt to diverse contexts more effectively, and deliver consistent value across user populations.

| Risk Management Approach | Typical Outcomes | Ethical Approach | Improved Outcomes |
|---|---|---|---|
| Minimal oversight | Bias incidents, privacy breaches | Continuous auditing | 20% fewer incidents |
| Reactive compliance | Legal penalties, reputation damage | Proactive governance | 30% risk reduction |
| Siloed implementation | Inconsistent standards | Cross-functional ethics councils | Unified accountability |
| Performance-only metrics | Unfair outcomes | Balanced KPIs | Equitable results |

Pro Tip: Establish red team exercises where diverse stakeholders attempt to find ethical vulnerabilities in your AI systems before deployment.

Understanding why AI data privacy matters helps organizations design protective measures from the ground up. Similarly, exploring the role of AI in local government reveals sector-specific risks that require tailored mitigation strategies.

A healthcare system discovered that its diagnostic AI performed poorly on underrepresented patient groups. Through systematic risk management, the organization identified gaps in the training data and corrected them. This prevented potential misdiagnoses that could have harmed patients and exposed the organization to malpractice liability.
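
Performance gaps of this kind usually stay hidden when accuracy is reported only as a single overall number. The sketch below breaks accuracy down by group and flags groups that trail the best-performing one. The function names, the `max_gap` value of 0.05, and the sample records are hypothetical choices for illustration.

```python
def accuracy_by_group(records):
    """Compute accuracy per group from (group, predicted, actual) tuples."""
    correct, total = {}, {}
    for group, predicted, actual in records:
        total[group] = total.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + (predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def underperforming_groups(per_group, max_gap=0.05):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, acc in per_group.items() if best - acc > max_gap)


# Hypothetical evaluation set: 9 of 10 correct for one group, 6 of 10 for the other.
records = (
    [("well_represented", 1, 1)] * 9 + [("well_represented", 1, 0)] * 1
    + [("underrepresented", 1, 1)] * 6 + [("underrepresented", 0, 1)] * 4
)
per_group = accuracy_by_group(records)
flagged = underperforming_groups(per_group)
print(per_group, flagged)
```

A flagged group points to where training data coverage should be reviewed first.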

Transforming ethical AI principles into practical action

Ethical AI requires embedding principles into governance, not just writing policies. Many organizations articulate beautiful values but lack mechanisms to operationalize them. The gap between aspiration and implementation determines whether ethics remain symbolic or become structural.

Frameworks analyze AI ethics through technological, psychological, social, and geopolitical factors. This multidimensional approach captures the full complexity of ethical challenges. Technology alone cannot solve problems rooted in human behavior or social context. Effective frameworks integrate all relevant perspectives.

AI ethicists facilitate structured decision-making within organizations. These professionals act as bridges across legal, security, data science, and business teams. They translate abstract ethical principles into concrete requirements that each function can implement. This facilitation prevents ethics from becoming another compliance checkbox.

Ethics councils and measurable indicators translate ethics into auditable outcomes. Councils bring diverse stakeholders together to deliberate on difficult trade-offs. Indicators provide objective measures of ethical performance that organizations can track over time. Together, they create accountability mechanisms that make ethics real.

Consider the difference between abstract guidelines and operational frameworks:

| Abstract Guidelines | Operational Frameworks |
|---|---|
| “Be fair” | Demographic parity metrics with quarterly targets |
| “Respect privacy” | Differential privacy implementation with epsilon values |
| “Enable transparency” | Explanation interfaces with user comprehension testing |
| “Ensure accountability” | Decision audit logs with designated review responsibilities |
| “Promote well-being” | Impact assessments measuring community outcomes |
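
To make the “epsilon values” row concrete, here is a minimal sketch of the Laplace mechanism, a standard way to release a count with differential privacy. The function name `dp_count` and the sample numbers are hypothetical, and the code assumes each person changes the count by at most `sensitivity`. Production systems would normally rely on a vetted privacy library, because naive floating-point noise generation has known weaknesses.

```python
import random


def dp_count(true_count, epsilon, sensitivity=1.0, rng=None):
    """Return true_count plus Laplace noise with scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    # The difference of two independent exponential samples with the same
    # rate is Laplace-distributed with scale 1 / rate.
    rate = epsilon / sensitivity
    noise = rng.expovariate(rate) - rng.expovariate(rate)
    return true_count + noise


# Hypothetical query: a true count of 120 released with epsilon = 0.5.
# A fixed seed is used only to make this illustration repeatable.
noisy = dp_count(120, epsilon=0.5, rng=random.Random(42))
print(noisy)
```

Smaller epsilon values add more noise and give stronger privacy, so the value chosen is a policy decision as much as a technical one.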

Implementation requires several practical steps:

- Establish cross-functional ethics committees with decision authority

- Define measurable indicators for each ethical principle

- Integrate ethics checkpoints into development workflows

- Create accessible mechanisms for stakeholder input and appeals

- Conduct regular audits and publish results transparently

Explore practical resources like the AI education checklist to see how operational frameworks work in specific contexts. Similarly, examining local government AI solutions reveals sector-adapted implementation models.

A financial services firm transformed vague fairness commitments into specific lending criteria. They defined acceptable disparity thresholds, implemented real-time monitoring, and empowered an ethics council to halt deployments violating standards. This operational approach prevented discriminatory outcomes while maintaining business performance.
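
A disparity threshold can be enforced as an automated checkpoint before release. The sketch below is one possible shape for such a gate, not the firm's actual process: `deployment_gate`, `GateResult`, and the default threshold of 0.8 are hypothetical, and the real threshold would be set by the ethics council.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    """Outcome of a pre-deployment fairness check."""
    allowed: bool
    reason: str


def deployment_gate(group_rates, min_parity_ratio=0.8):
    """Block deployment when the lowest/highest approval-rate ratio is too low."""
    if not group_rates:
        return GateResult(False, "no fairness metrics supplied")
    highest = max(group_rates.values())
    lowest = min(group_rates.values())
    ratio = lowest / highest if highest > 0 else 1.0
    if ratio < min_parity_ratio:
        return GateResult(
            False,
            f"parity ratio {ratio:.2f} is below the {min_parity_ratio:.2f} threshold",
        )
    return GateResult(
        True,
        f"parity ratio {ratio:.2f} meets the {min_parity_ratio:.2f} threshold",
    )


# Hypothetical approval rates per group for a candidate model.
result = deployment_gate({"A": 0.80, "B": 0.50})
print(result.allowed, result.reason)
```

Returning a reason alongside the decision gives the council a record of why a deployment was halted.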

Moving from principle to practice demands sustained commitment and resource allocation. Organizations that invest in robust implementation infrastructure realize the full value of ethical AI. Those that treat ethics superficially gain only symbolic benefits while exposing themselves to substantive harms.

Explore AI solutions that prioritize ethics and impact

Discover practical AI tools and adoption strategies that embed ethics from design through deployment. The educational AI adoption guide shows how to integrate AI responsibly in learning environments while promoting equity. Explore AI solutions for local government that enhance public services while maintaining community trust and accountability.

Learn how to optimize your digital presence ethically through the generative search optimization guide. These resources help you master ethical AI deployment, turning principles into measurable operational improvements that benefit your organization and stakeholders alike.

Frequently asked questions

Is ethical AI only about avoiding legal risks?

No, ethical AI encompasses fairness, transparency, and societal well-being beyond legal compliance. Ethical AI promotes societal values and human dignity alongside organizational objectives. While compliance matters, ethics creates long-term value through stakeholder trust and sustainable operations.

How can business leaders benefit from ethical AI?

Ethical AI builds customer and stakeholder trust, boosting brand loyalty and competitive differentiation. Investing in AI ethics reduces legal risks and prevents costly reputational damage. Explore AI transparency benefits to understand the full business case.

What should educational administrators do to ensure ethical AI use?

Ensure AI tools are bias-free and transparent in student-impacting decisions like admissions or resource allocation. Educational AI tools must promote fairness and avoid perpetuating existing disparities. Review the AI education checklist for practical implementation guidance.

Why must local governments adopt ethical AI practices?

Ethical AI ensures responsible public service delivery and maintains citizen trust in government operations. In local government, it aligns technology with community values and democratic accountability. Learn about the role of AI in local government to see practical applications.

How do organizations measure ethical AI performance?

Organizations use specific metrics like demographic parity ratios, explanation quality scores, and privacy protection audits. These measurable indicators track ethical performance over time and enable continuous improvement through data-driven adjustments.
