Business leaders face a stark reality: AI-enabled cyber threats rank among the top three organizational risks in 2026. As your organization accelerates AI adoption to gain competitive advantages, you simultaneously expose critical systems to sophisticated attacks that traditional security measures cannot stop. The gap between AI innovation and cybersecurity readiness has never been wider. This guide reveals why prioritizing cybersecurity in your AI strategy is no longer optional but essential for protecting your operations, data, and reputation. You’ll discover actionable insights to transform security from an afterthought into a strategic advantage that enables safer, more confident AI deployment across your enterprise.

Table of Contents

- Understanding The Evolving AI Cybersecurity Risk Landscape

- Why Traditional Cybersecurity Approaches Fall Short Against AI Threats

- Applying A Systems Approach To Secure AI-Driven Enterprises

- Leadership And Organizational Action To Prioritize Cybersecurity In AI

- Protect Your AI Investments With Expert Cybersecurity Guidance

- What Are The Biggest AI-Enabled Cyber Threats Today?

Key takeaways

| Point | Details |

|---|---|

| AI threats dominate attacks | Approximately 60% of recent cyberattacks involve AI-enhanced methods that bypass traditional defenses. |

| Defense adoption lags | Only 7% of organizations have implemented AI-driven security despite 60% likely facing AI-powered attacks. |

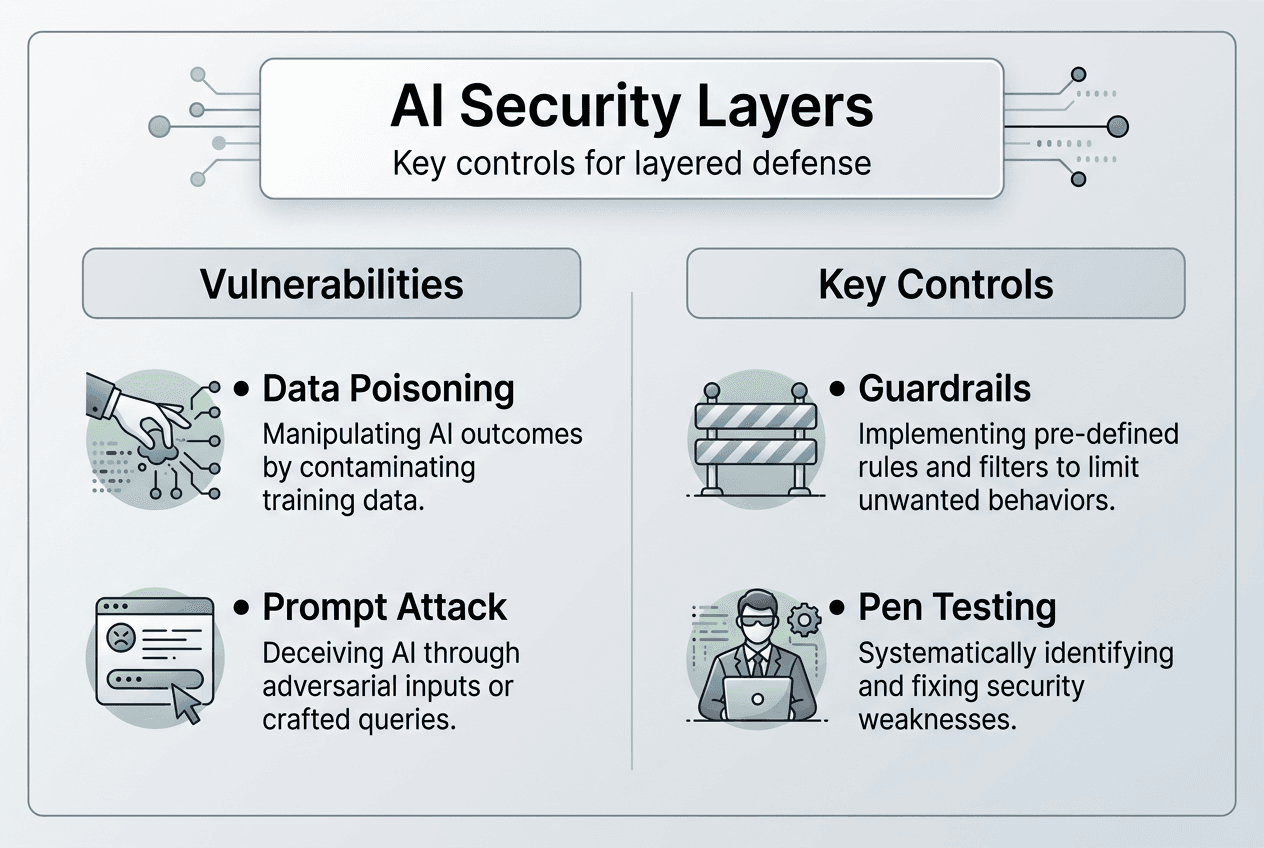

| Systems approach required | Layered defenses with deterministic guardrails at multiple levels significantly raise exploitation difficulty. |

| Leadership drives readiness | CEO and CISO collaboration is critical to bridge the gap between risk awareness and concrete security investments. |

Understanding the evolving AI cybersecurity risk landscape

The cybersecurity landscape has fundamentally shifted. Research reveals that 60% of recent cyberattacks suggest AI involvement, marking a dramatic escalation in both attack sophistication and frequency. Attackers now leverage machine learning to automate vulnerability discovery, craft convincing phishing campaigns, and adapt their tactics in real time based on your defenses. Your security teams face adversaries who operate at machine speed with machine precision.

The awareness problem is not the issue. Business leaders recognize the danger. Yet a troubling gap persists between recognition and action. Studies show that while 60% of organizations likely faced AI-powered attacks, only 7% adopted AI-driven defenses. This disparity reveals a critical vulnerability: knowing about threats does not protect you from them. Your competitors who act decisively gain a security advantage that translates directly into operational resilience and customer trust.

The speed differential compounds the problem. AI accelerates attack capabilities faster than defense improvements, creating an asymmetric battlefield where offense consistently outpaces defense. Attackers experiment freely with offensive AI tools, iterating rapidly without regulatory constraints or ethical considerations. Meanwhile, your security teams work within compliance frameworks, budget limitations, and organizational processes that slow response times. This structural disadvantage demands a fundamental rethinking of how you approach AI security.

Consider these emerging threat vectors that specifically target AI systems:

- Adversarial inputs designed to manipulate model outputs and extract sensitive training data

- Automated reconnaissance tools that map your AI infrastructure faster than manual audits

- Deepfake technologies creating convincing impersonations of executives for social engineering

- Supply chain attacks targeting AI model repositories and training datasets

The data paints an urgent picture. Organizations that delay implementing AI-specific security measures face exponentially growing risks. Every day without proper defenses increases your exposure to attacks that can compromise intellectual property, customer data, and operational integrity. Understanding transparency in AI matters becomes crucial as you evaluate where vulnerabilities exist in your current systems. The AI cybersecurity risk report provides comprehensive analysis of these evolving threats and their business implications.

Why traditional cybersecurity approaches fall short against AI threats

Your existing security infrastructure was built for a different era. Traditional cybersecurity tools operate on assumptions that no longer hold true when defending AI systems. The fundamental architecture of AI models differs dramatically from conventional software, creating vulnerabilities that standard security approaches cannot address. Firewalls, intrusion detection systems, and antivirus software all struggle to protect against threats designed specifically to exploit machine learning systems.

Research demonstrates that traditional AI alignment and model hardening prove insufficient for securing agentic systems. The past five decades of security practices focused on hardening perimeters and validating inputs. These methods assume clear boundaries between trusted and untrusted data. AI models blur these boundaries fundamentally. They process natural language, interpret context, and make decisions based on patterns rather than explicit rules. This creates attack surfaces that traditional tools cannot even recognize, let alone defend.

Prompt injection attacks exemplify this new vulnerability class. Studies confirm that LLMs remain vulnerable to prompt injection attacks bypassing traditional defenses. An attacker can embed malicious instructions within seemingly innocent inputs, causing your AI system to ignore safety guidelines, leak confidential information, or execute unauthorized actions. Web Application Firewalls designed to block SQL injection or cross-site scripting attacks cannot distinguish between legitimate prompts and malicious ones because both appear as normal text inputs.

The challenge extends beyond technical limitations to conceptual ones. Traditional security models rely on deterministic behavior. You define rules, systems follow them, and deviations trigger alerts. AI systems operate probabilistically. They generate outputs based on statistical patterns, making their behavior inherently less predictable. This probabilistic nature means you cannot simply write rules to prevent all unwanted behaviors. Your security strategy must account for uncertainty and adapt to emergent threats that rule-based systems would miss.

Consider these specific failure modes of traditional security tools:

- Signature-based detection misses novel AI-generated malware variants

- Access controls fail when AI models inadvertently memorize and leak training data

- Network segmentation becomes ineffective when AI systems require broad data access

- Static code analysis cannot evaluate the security of learned model parameters

Pro Tip: Regularly simulate AI-specific attack scenarios to uncover hidden vulnerabilities before attackers do. Use red team exercises that specifically target your AI systems with prompt injection, model inversion, and data poisoning attempts. These simulations reveal gaps that traditional penetration testing misses.

Implementing AI penetration testing techniques provides crucial visibility into these unique vulnerabilities. The agentic system security research and prompt injection AI attacks research offer deeper technical analysis of why conventional approaches fail and what new methods show promise. Your security posture must evolve beyond traditional tools to address these fundamentally different threat vectors.

Applying a systems approach to secure AI-driven enterprises

Effective AI security requires abandoning the illusion of perfect defense. No single tool or technique can fully protect complex AI systems. Instead, you need a comprehensive, layered defense strategy that raises the cost and complexity of successful attacks to levels that deter most adversaries. This systems approach treats security as an ongoing process of risk management rather than a one-time implementation project.

Research validates this approach. Studies show that a systems approach with multi-layered defenses raises attacker exploitation burden significantly. Each defensive layer forces attackers to develop additional capabilities, invest more resources, and accept greater risk of detection. While determined adversaries might eventually breach any single layer, the cumulative effect of multiple defenses makes successful attacks exponentially more difficult. This defense-in-depth philosophy has proven effective across cybersecurity domains and applies especially well to AI systems.

Deterministic guardrails at multiple abstraction layers form the foundation of this approach. You implement security controls at the data layer, model layer, application layer, and infrastructure layer. Each layer enforces specific constraints appropriate to its function. Data layer controls prevent poisoning of training sets. Model layer guardrails limit output to safe response categories. Application layer filters validate inputs and outputs against business rules. Infrastructure controls restrict network access and monitor resource usage. Together, these layers create overlapping protection that compensates for individual weaknesses.

The security landscape demands continuous adaptation. Analysis indicates that 2026 cybersecurity risks are driven by AI advances and geopolitical fragmentation. Threat actors constantly evolve their techniques, exploiting new vulnerabilities as they emerge. Your defensive posture must evolve equally quickly. This requires ongoing security evaluation, regular threat modeling, and systematic testing of your defenses against current attack methods. Static security implementations become obsolete within months as attackers discover new exploitation techniques.

Implement these key steps to build a robust systems approach:

- Conduct comprehensive security assessment of all AI systems currently deployed or in development

- Design layered defenses specific to each system’s architecture and risk profile

- Deploy deterministic guardrails at data, model, application, and infrastructure layers

- Develop detailed attacker models based on current threat intelligence and vulnerability research

- Establish continuous security monitoring with automated anomaly detection

- Schedule regular penetration testing focused specifically on AI system vulnerabilities

- Create incident response procedures tailored to AI-specific attack scenarios

- Implement feedback loops that incorporate security findings into development processes

The following table illustrates how different defensive layers address specific threat categories:

| Defense Layer | Primary Threats Addressed | Key Controls |

|---|---|---|

| Data Layer | Training data poisoning, data leakage | Input validation, data provenance tracking, access controls |

| Model Layer | Adversarial inputs, model extraction | Output filtering, confidence thresholds, query rate limiting |

| Application Layer | Prompt injection, unauthorized access | Input sanitization, authentication, authorization checks |

| Infrastructure Layer | Resource exhaustion, lateral movement | Network segmentation, resource quotas, activity monitoring |

Pro Tip: Treat security as a design requirement from the earliest stages of AI system development, not as an afterthought added before deployment. Early integration of security considerations reduces costs, prevents architectural vulnerabilities, and accelerates time to secure production deployment.

Developing expertise in AI cybersecurity training programs equips your teams with skills to implement these layered defenses effectively. Understanding AI data privacy strategies complements technical controls with policy frameworks that protect sensitive information. The agentic systems layered defense research and 2026 cybersecurity risk outlook provide detailed technical guidance for implementing these systems-level security approaches in your organization.

Leadership and organizational action to prioritize cybersecurity in AI

Technology alone cannot solve AI security challenges. The most sophisticated defenses fail without organizational commitment, adequate resources, and executive leadership that treats cybersecurity as a strategic priority rather than a compliance checkbox. Your role as a business leader directly determines whether your organization successfully navigates the AI security landscape or becomes another breach statistic.

The gap between awareness and action represents an organizational failure, not a technical one. Leaders recognize the risks but struggle to translate that recognition into concrete investments and policy changes. Budget constraints, competing priorities, and talent shortages all contribute to this inertia. Yet these challenges affect every organization. The companies that overcome them gain competitive advantages through more secure, reliable AI systems that customers trust and regulators approve.

Collaboration between business and security leadership proves essential. Research emphasizes that CEOs and CISOs must collaborate to ensure cyber readiness in the AI era. CISOs understand technical threats but often lack authority to drive organizational change. CEOs control resources and set strategic direction but may not grasp the technical nuances of AI security risks. This disconnect creates dangerous blind spots where critical vulnerabilities persist because no single leader has both the knowledge and authority to address them.

Your decisions today shape your security posture for years. As one industry report notes, cybersecurity’s future is shaped by leadership decisions today. Investments in security infrastructure, talent development, and organizational processes compound over time. Early action establishes foundations that become increasingly valuable as AI adoption expands. Delayed action creates technical debt and cultural resistance that become progressively harder to overcome.

The organizations that thrive in the AI era will be those whose leaders recognize that cybersecurity is not a cost center to minimize but a strategic capability to maximize. Every security investment today prevents exponentially larger losses tomorrow.

Implement these leadership best practices to prioritize AI cybersecurity effectively:

- Establish direct reporting lines between CISOs and executive leadership to ensure security concerns reach decision makers

- Allocate dedicated budget for AI-specific security tools, training, and personnel separate from general IT security

- Include security requirements in all AI project proposals and evaluate security outcomes in project reviews

- Develop internal AI security expertise through training programs rather than relying solely on external consultants

- Create cross-functional security committees that include representatives from business units deploying AI systems

- Mandate regular security briefings that translate technical risks into business impact terms executives understand

- Tie executive compensation partially to security metrics to align incentives with organizational security posture

- Communicate security expectations clearly throughout the organization to build a culture of security awareness

Talent acquisition and retention require particular attention. The shortage of qualified AI security professionals means you must invest in developing internal expertise. Partner with universities, sponsor certifications, and create career paths that reward security specialization. Organizations that build deep internal security capabilities gain flexibility and responsiveness that those dependent on external resources cannot match.

Leveraging strategic AI consulting value helps bridge knowledge gaps while you develop internal capabilities. Engaging AI penetration testing insights provides objective assessments of your current security posture. The AI cybersecurity leadership report and cybersecurity leadership outlook offer frameworks for building effective leadership structures around AI security.

Protect your AI investments with expert cybersecurity guidance

The complexity of AI security demands specialized expertise that most organizations lack internally. Building this capability from scratch requires years of investment in talent, tools, and experience. You face immediate threats that cannot wait for gradual capability development. Professional AI cybersecurity services bridge this gap, providing immediate protection while you develop long-term internal capabilities.

Comprehensive AI cybersecurity awareness training equips your teams with practical skills to recognize and respond to AI-specific threats. These programs go beyond generic security awareness to address the unique vulnerabilities of machine learning systems. Participants learn to identify prompt injection attempts, validate AI outputs, and implement secure AI development practices.

Specialized AI penetration testing services reveal vulnerabilities before attackers exploit them. These assessments use the same techniques adversaries employ, providing realistic evaluation of your defenses. You receive detailed reports identifying specific weaknesses and actionable remediation guidance prioritized by risk level.

Strategic AI consulting helps you develop comprehensive security strategies aligned with your business objectives. Consultants bring cross-industry experience and technical depth that accelerates your security program maturity. They help you avoid common pitfalls, implement proven best practices, and build sustainable security capabilities that scale with your AI adoption.

What are the biggest AI-enabled cyber threats today?

What are the biggest AI-enabled cyber threats today?

AI-enabled threats include sophisticated prompt injection attacks that manipulate model behavior, deepfake-powered phishing campaigns impersonating executives, automated vulnerability discovery tools that find exploits faster than defenders can patch them, and intelligent data exfiltration malware that adapts to avoid detection. These threats leverage machine learning to operate at scale and speed impossible for human attackers. Traditional security tools struggle to detect these AI-powered attacks because they mimic legitimate user behavior and adapt their tactics in real time based on defensive responses.

How can organizations improve AI cybersecurity readiness?

Organizations improve readiness by implementing comprehensive security approaches that go beyond traditional model hardening to address system-level vulnerabilities. This includes investing in employee training programs focused on AI-specific threats, conducting regular AI penetration testing techniques to identify vulnerabilities, and fostering close collaboration between security teams and business leadership. Effective readiness requires treating security as an ongoing process rather than a one-time implementation, with continuous monitoring, regular threat modeling, and systematic updates to defensive capabilities as new threats emerge.

Why are traditional cybersecurity tools inadequate against AI threats?

Traditional tools assume clear boundaries between trusted and untrusted inputs, but AI models process natural language and contextual information that blurs these boundaries fundamentally. Web Application Firewalls cannot distinguish between legitimate prompts and malicious prompt injection attacks because both appear as normal text. Signature-based detection misses novel AI-generated malware variants that evolve faster than signature databases update. The probabilistic nature of AI systems means their behavior cannot be fully constrained by deterministic rules that traditional security tools enforce. Prompt injection AI attacks research demonstrates how these hybrid attacks exploit fundamental limitations in conventional security architectures.

What role does leadership play in AI cybersecurity?

Leadership determines whether organizations successfully translate risk awareness into concrete security investments and organizational changes. CEOs and CISOs must collaborate closely to ensure technical security requirements receive adequate resources and strategic priority. Leaders establish the security culture, allocate budgets, approve architectural decisions, and set expectations that cascade throughout the organization. Without executive commitment, security initiatives struggle to gain traction against competing business priorities. Effective leaders treat cybersecurity as a strategic capability that enables safer AI adoption rather than viewing it as a compliance cost to minimize.

Recommended

- Why transparency in AI matters for business leaders in 2026 | Artificial Intelligence

- Future of AI in Schools 2026: Transforming Learning | Artificial Intelligence

- 6 Key Advantages of AI for SMEs and How to Use Them | Artificial Intelligence

- How AI boosts efficiency and engagement in small business | Artificial Intelligence

Recent Comments