TL;DR:

- Many organizations fail to establish clear objectives before measuring AI impact.

- Effective measurement focuses on specific KPIs for operational efficiency and customer engagement.

- Continuous feedback and iteration are essential for maximizing AI ROI over time.

AI investments are accelerating across every sector, yet many organizations struggle to clearly quantify what those investments actually deliver. You’ve deployed the tools, trained the teams, and watched the dashboards light up. But when the board asks for hard numbers, confidence wavers. This guide gives you a practical, step-by-step framework to measure AI’s real impact on operational efficiency and customer engagement. From setting objectives to selecting the right tools and communicating ROI, you’ll walk away with a process you can implement immediately and refine over time.

Table of Contents

- Define objectives and set expectations

- Identify key metrics and data sources

- Select the right measurement tools and software

- Analyze results and communicate value

- What most leaders overlook when measuring AI impact

- Advance your AI strategy with expert resources

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Start with clear goals | Defining business objectives up front ensures your AI measurement is aligned and actionable. |
| Choose the right metrics | Track both operational and engagement KPIs to capture AI’s full impact. |
| Use reliable tools | The right analytics platforms make ongoing measurement and reporting efficient. |
| Analyze and adapt | Continuous review and iteration help translate AI data into business value over time. |

Define objectives and set expectations

Every meaningful measurement effort starts before the AI goes live. If you don’t define what success looks like upfront, you’ll spend months chasing data that doesn’t tell you anything useful. Setting clear objectives is the foundation for meaningful measurement, and it’s where most organizations either win or lose the measurement game before it begins.

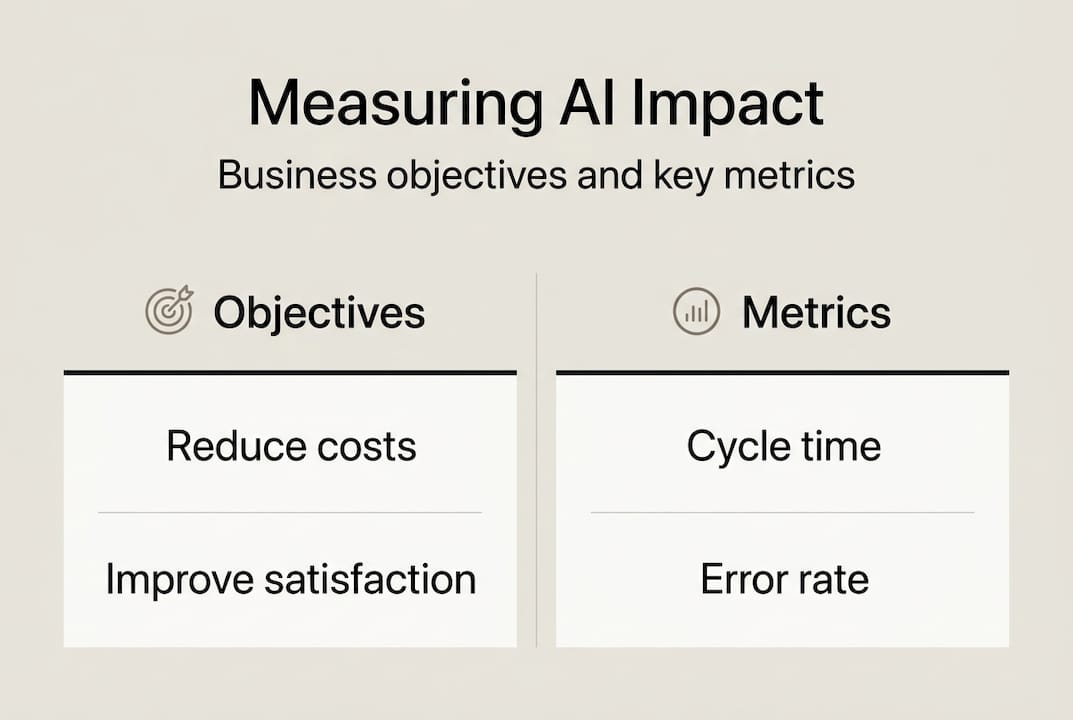

Start by anchoring your AI initiative to a specific business goal. Are you trying to reduce operational costs? Improve customer satisfaction scores? Accelerate a process that currently takes days? Each goal points to a different set of metrics and a different definition of success. Vague goals like “improve efficiency” won’t hold up in a boardroom conversation.

Once you have your goals, translate them into SMART targets: Specific, Measurable, Achievable, Relevant, and Time-bound. For example, instead of “improve customer response times,” commit to “reduce average first-response time from 24 hours to 4 hours within 90 days of AI deployment.” That’s a target you can actually track.

Here’s what strong AI objectives typically include:

- A baseline metric (where you are today)

- A target metric (where you want to be)

- A defined timeframe for achieving the target

- A named owner responsible for tracking progress

- A reporting cadence agreed upon by stakeholders
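One way to keep these five elements together is to record each objective as a small structured record. The sketch below is illustrative only: the class name `AIObjective`, its field names, the deployment date, and the owner are assumptions made for this example, and the numbers reuse the first-response-time target described above.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AIObjective:
    """One measurable AI objective. All names here are illustrative."""
    name: str
    baseline: float        # where you are today
    target: float          # where you want to be
    start: date            # deployment date
    timeframe_days: int    # window for reaching the target
    owner: str             # person responsible for tracking progress
    cadence: str           # reporting rhythm agreed with stakeholders

    @property
    def deadline(self) -> date:
        """Date by which the target should be reached."""
        return self.start + timedelta(days=self.timeframe_days)

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works whether the target sits below or above the baseline.
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap


objective = AIObjective(
    name="Average first-response time (hours)",
    baseline=24.0,
    target=4.0,
    start=date(2025, 1, 1),    # hypothetical deployment date
    timeframe_days=90,
    owner="Head of Support",   # hypothetical owner
    cadence="monthly",
)

print(objective.deadline)        # 2025-04-01
print(objective.progress(14.0))  # 0.5, i.e. half of the gap closed
```

A progress value of 1.0 means the target has been met; values above 1.0 mean it has been exceeded.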

Engaging stakeholders early is just as important as the metrics themselves. Different leaders care about different outcomes. Operations wants cycle time. Marketing wants engagement rates. Finance wants cost per transaction. Aligning everyone on what will be measured and when it will be reported prevents the classic scenario where AI delivers real value but nobody agrees it counts. Explore our AI strategy guide for a structured approach to building this alignment across your organization.

Pro Tip: Run a 30-minute stakeholder alignment session before finalizing your measurement plan. Ask each leader to name their top two success indicators. You’ll surface conflicts early and build shared ownership of the results.

Identify key metrics and data sources

With clear objectives in place, the next step is selecting metrics that actually reflect progress and making sure you have reliable data to support them. This is where measurement frameworks get practical.

AI impact is often measured through changes in operational efficiency and customer engagement KPIs. The key is choosing metrics that are directly influenced by your AI initiative, not just broadly correlated with business performance.

For operational efficiency, focus on:

- Process cycle time (how long a task takes from start to finish)

- Automation rate (percentage of tasks handled without human intervention)

- Error or defect reduction rate

- Cost per transaction or per output unit

For customer engagement, track:

- Net Promoter Score (NPS)

- Customer Satisfaction Score (CSAT)

- Customer retention and churn rates

- Average resolution time for support interactions
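Several of these metrics reduce to simple arithmetic once the raw counts are available. The following is a minimal sketch with made-up sample figures; the function names are chosen for this example and do not come from any particular analytics platform. The NPS calculation uses the standard definition: percentage of promoters (ratings of 9 or 10) minus percentage of detractors (ratings of 0 to 6) on a 0 to 10 scale.

```python
def automation_rate(automated_tasks: int, total_tasks: int) -> float:
    """Percentage of tasks handled without human intervention."""
    if total_tasks <= 0:
        raise ValueError("total_tasks must be positive")
    return 100.0 * automated_tasks / total_tasks


def cost_per_transaction(total_cost: float, transactions: int) -> float:
    """Total cost divided by the number of transactions processed."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return total_cost / transactions


def net_promoter_score(ratings: list[int]) -> float:
    """NPS: % promoters (9-10) minus % detractors (0-6)."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical sample data for one reporting period.
print(automation_rate(640, 800))             # 80.0
print(cost_per_transaction(12000.0, 800))    # 15.0
print(net_promoter_score([10, 9, 9, 8, 7, 6, 3, 10, 5, 9]))  # 20.0
```

Running the same functions on pre-deployment and post-deployment data gives directly comparable figures.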

Here’s a simple reference table for mapping metrics to data sources:

| Metric | Data source | Baseline required |
|---|---|---|
| Process cycle time | Workflow or ERP system | Yes |
| Automation rate | AI platform analytics | Yes |
| Error reduction rate | Quality management system | Yes |
| NPS / CSAT | Survey tools (e.g., Qualtrics) | Yes |
| Customer retention | CRM platform | Yes |
| Average resolution time | Help desk software | Yes |

The business impact of AI is only visible when you have a pre-AI baseline to compare against. If you’re mid-deployment and haven’t captured baseline data yet, pause and pull historical records now. Without that reference point, your post-deployment numbers are just numbers. Review our AI best practices and consulting case studies to see how other organizations structured their baseline capture.

Pro Tip: Don’t rely on a single data source for any critical metric. Cross-reference your AI platform’s built-in analytics with your CRM or ERP data. Discrepancies often reveal integration gaps worth fixing early.
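A cross-reference like this can be automated with a small comparison routine. The sketch below assumes each system’s figures have already been exported into a plain dictionary keyed by metric name; the function name `find_discrepancies`, the 5% tolerance, and the sample values are all assumptions for illustration.

```python
from typing import Optional


def find_discrepancies(
    source_a: dict[str, float],
    source_b: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, tuple[Optional[float], Optional[float]]]:
    """Return metrics that disagree between two sources.

    A metric is flagged when it is missing from one source, or when the
    two values differ by more than `tolerance` relative to the larger one.
    """
    issues: dict[str, tuple[Optional[float], Optional[float]]] = {}
    for metric in sorted(source_a.keys() | source_b.keys()):
        a = source_a.get(metric)
        b = source_b.get(metric)
        if a is None or b is None:
            issues[metric] = (a, b)  # present in only one system
            continue
        scale = max(abs(a), abs(b))
        if scale > 0 and abs(a - b) / scale > tolerance:
            issues[metric] = (a, b)
    return issues


# Hypothetical exports from an AI platform and a help desk system.
ai_platform = {
    "tickets_resolved": 1200.0,
    "avg_resolution_minutes": 42.0,
    "automation_rate": 61.0,
}
help_desk = {
    "tickets_resolved": 1050.0,
    "avg_resolution_minutes": 43.0,
}

# Flags automation_rate (missing from the help desk export) and
# tickets_resolved (values differ by more than 5%).
print(find_discrepancies(ai_platform, help_desk))
```

The tolerance is a judgment call: set it too tight and rounding differences will be flagged, too loose and real integration gaps will slip through.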

Select the right measurement tools and software

Knowing what to measure is only half the challenge. You also need tools that can capture, organize, and surface that data consistently. Platform choice can determine the accuracy and granularity of AI impact reports, and the wrong choice creates reporting gaps that undermine your credibility with stakeholders.

You’ll generally encounter three categories of measurement tools:

- Built-in analytics: Most AI platforms include native dashboards. These are fast to set up but often limited in customization and cross-system integration.

- Third-party BI dashboards: Tools like Tableau, Power BI, or Looker pull data from multiple sources and allow custom reporting. They require more setup but offer far greater flexibility.

- Custom solutions: Built specifically for your organization’s data architecture. Highest cost and longest setup time, but ideal for complex, multi-system environments.

Here’s a comparison to help you evaluate your options:

| Tool type | Setup time | Integration depth | Customization | Best for |
|---|---|---|---|---|
| Built-in analytics | Low | Limited | Low | Early-stage AI pilots |
| Third-party BI tools | Medium | High | High | Mid-to-large organizations |
| Custom solutions | High | Very high | Very high | Enterprise or regulated sectors |

When evaluating any tool, check for these four capabilities:

- Native integration with your existing data systems (CRM, ERP, help desk)

- Automated reporting that reduces manual effort

- Role-based access so each stakeholder sees what’s relevant to them

- Historical data import to support baseline comparisons

According to McKinsey’s CEO guide to generative AI, organizations that invest in proper data infrastructure before scaling AI see significantly better measurement outcomes. Don’t skip the infrastructure step to move faster. It costs more to fix later. Our AI tool checklist and AI marketing tools resources can help you evaluate options specific to your sector and use case.

Analyze results and communicate value

Once your tools are running and data is flowing, the real work begins: making sense of what the numbers actually say and presenting that story clearly to the people who matter.

Demonstrating value requires translating data into actionable business insights. Raw numbers rarely persuade. What moves decision-makers is the story those numbers tell about business outcomes.

Follow this structured process for analyzing and reporting AI results:

- Aggregate your data across all sources into a single reporting view. Fragmented data leads to fragmented conclusions.

- Compare against baselines for each metric. Calculate the percentage change and express it in business terms (e.g., “We reduced resolution time by 38%, saving approximately 1,200 staff hours per quarter”).

- Identify trends over time. A single data point is noise. Three or more data points in the same direction become a signal worth acting on.

- Attribute changes to AI specifically. Rule out external factors like seasonal demand shifts or staffing changes that could explain the results independently.

- Document what didn’t work. Honest reporting builds credibility. If a metric moved in the wrong direction, explain why and what you’re doing about it.

- Present recommendations, not just results. Every report should end with a clear next step.
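The baseline comparison and trend steps above can be sketched in a few lines. The figures below are hypothetical and chosen to reproduce a 38% reduction in resolution time. The trend check is one possible reading of “three or more data points in the same direction”: the most recent observations must all move strictly up or strictly down. The function names are assumptions for this example.

```python
def percent_change(baseline: float, current: float) -> float:
    """Change from baseline, as a percentage (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100.0 * (current - baseline) / baseline


def hours_saved(minutes_saved_per_item: float, items: int) -> float:
    """Translate a per-item time saving into total staff hours."""
    return minutes_saved_per_item * items / 60.0


def is_consistent_trend(values: list[float], min_points: int = 3) -> bool:
    """True if the last `min_points` values move strictly in one direction."""
    if min_points < 2:
        raise ValueError("min_points must be at least 2")
    if len(values) < min_points:
        return False
    recent = values[-min_points:]
    diffs = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return all(d > 0 for d in diffs) or all(d < 0 for d in diffs)


# Hypothetical figures: average resolution time in minutes.
baseline_minutes = 50.0
current_minutes = 31.0

print(percent_change(baseline_minutes, current_minutes))   # -38.0
print(round(hours_saved(baseline_minutes - current_minutes, 3800)))  # 1203
print(is_consistent_trend([50.0, 44.0, 37.0, 31.0]))       # True
print(is_consistent_trend([50.0, 44.0, 46.0]))             # False
```

A check like this only tells you that a metric is moving. It does not establish that AI caused the movement, so the attribution step still requires ruling out external factors.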

“The organizations that get the most from AI measurement aren’t the ones with the most data. They’re the ones that connect data to decisions consistently.”

For guidance on verifying AI system integrity alongside performance, our AI penetration testing resources are worth reviewing. And for a deeper look at measuring ROI of AI across different organizational contexts, Forbes Business Council offers a useful external perspective.

Pro Tip: Build a one-page executive summary for each reporting cycle. Busy leaders won’t read a 20-slide deck. A single page with three wins, one challenge, and one recommendation gets read every time.

What most leaders overlook when measuring AI impact

Here’s the uncomfortable truth we’ve observed working across sectors: most organizations treat AI measurement as a project with a finish line. They measure at launch, report the results, and move on. That’s where the real value gets left on the table.

AI systems improve when they’re fed feedback. The measurement framework you build isn’t just a reporting tool. It’s a feedback loop that should actively inform how your AI models are tuned, how your processes are adjusted, and where your next investment goes. The organizations seeing the strongest long-term returns from AI are the ones that use measurement continuously to re-align their initiatives as business conditions change.

Challenges in your data often reveal more than the wins. A metric that’s moving in the wrong direction is pointing at a process gap, a training issue, or a misaligned objective. That’s not failure. That’s intelligence. Build a culture where measurement results drive genuine iteration, not just quarterly slide decks, and your AI investment will compound over time in ways a one-time audit never could.

Advance your AI strategy with expert resources

If you’re ready to move from measuring AI impact to accelerating it, we’ve built a set of resources designed specifically for business leaders navigating this journey. Our AI efficiency best practices guide walks you through proven frameworks for driving measurable results across operations and customer engagement. For step-by-step integration guidance, our AI integration tips resource covers the practical decisions that determine whether your AI initiative delivers or disappoints. And if you want to go deeper with expert support, join our AI webinar series where our consultants share real-world measurement strategies tailored to your sector. Schedule a FREE Strategy Session to get a personalized roadmap for your organization.

Frequently asked questions

What are the top metrics for measuring AI impact?

Common metrics include process cycle time, automation rate, error reduction rate, cost per transaction, Net Promoter Score (NPS), Customer Satisfaction Score (CSAT), and customer retention. Operational efficiency and engagement KPIs are the most reliable indicators of AI’s real business contribution.

How often should AI performance be evaluated?

AI performance should be reviewed monthly or quarterly based on business needs and data volume. Regular reporting cycles ensure meaningful tracking and keep stakeholders aligned on progress and accountability.

Which tools are best for tracking AI effectiveness?

Look for platforms that integrate with your data ecosystem and support customizable reporting. Platform choice directly affects the accuracy and depth of your AI impact reports, so prioritize integration capability over feature count.

What should I do if my results don’t match expectations?

Re-evaluate your objectives, verify data accuracy, and refine both your AI model and your measurement approach. Being ready to iterate your framework when results fall short is a sign of a mature measurement practice, not a failure.
