Customer churn signals appear weeks before cancellation. Student disengagement shows up in attendance patterns long before a failing grade. Yet most organizations only act when a problem has already escalated, not when the data first whispered a warning. Early intervention AI changes that equation entirely. Rather than waiting for a crisis to surface, it scans behavioral patterns, operational signals, and engagement data to flag risks before they grow. This guide breaks down what early intervention AI is, how it works across business and education settings, and how your organization can implement it with confidence.

Table of Contents

- Defining early intervention AI

- How early intervention AI works: core mechanisms

- Benefits and challenges of early intervention AI

- Implementing early intervention AI: practical frameworks

- Enhance your operations with early intervention AI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Proactive detection | Early intervention AI identifies problems and opportunities before they escalate for better outcomes. |
| Balanced oversight | Combining AI triggers with human judgment ensures responsible decision-making and avoids common pitfalls. |
| Scalable impact | Organizations achieve greater efficiency and engagement when early intervention AI is implemented with clear frameworks. |
| Nuanced challenges | Ethical and privacy concerns require careful attention for successful adoption of early intervention AI. |

Defining early intervention AI

Most people associate AI with automation: scheduling emails, processing invoices, routing support tickets. That framing is accurate but incomplete. Early intervention AI operates at a different level. Its purpose is not to execute routine tasks but to detect subtle warning signs that human teams would likely miss until it is too late.

Early intervention AI refers to AI systems and methodologies that detect potential problems, risks, or opportunities at an early stage, enabling proactive interventions before situations deteriorate. That distinction matters enormously for decision-makers. You are not just automating what already happens. You are creating a system that sees around corners.

This approach applies across several domains:

- Customer service: Detecting frustration signals in chat transcripts before a customer escalates or churns

- Education: Identifying disengagement patterns in student activity data before academic failure occurs

- Healthcare screening: Flagging early clinical indicators for preventive care

- AI model supervision: Monitoring language model outputs for drift or bias before errors compound

Following AI integration best practices is essential when deploying these systems, because early intervention AI requires careful calibration to your specific operational context. Generic setups produce generic results.

Early intervention AI is not a replacement for human judgment. It is a signal amplifier that surfaces what matters, faster than any manual review process could.

For organizations already exploring AI in education, this proactive model represents a significant upgrade from reactive reporting tools.

How early intervention AI works: core mechanisms

With the definition established, we can explore the mechanisms that make early intervention AI actionable across various sectors.

Three core techniques power most early intervention systems:

- Anomaly detection: The AI establishes a baseline of normal behavior, then flags deviations. A student who typically logs in daily but goes silent for three days triggers a review alert.

- Trend analysis: Rather than reacting to a single data point, the system tracks directional movement. A gradual decline in customer satisfaction scores over six weeks is more telling than one bad review.

- Predictive analytics: Using historical patterns, the AI assigns risk scores to current cases. A customer matching the profile of past churners gets flagged for proactive outreach.
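
The three techniques above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the function names, the z-score threshold, and the weights in `risk_score` are all hypothetical, and a real system would learn its scoring model from historical outcomes rather than hard-code it.

```python
import math
from statistics import mean, stdev


def is_anomaly(history, latest, z_threshold=3.0):
    """Anomaly detection: flag `latest` if it deviates strongly from the baseline."""
    if len(history) < 2:
        return False  # not enough data to define a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a perfectly flat baseline
    return abs(latest - mu) / sigma > z_threshold


def trend_slope(values):
    """Trend analysis: least-squares slope over equally spaced periods."""
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def risk_score(days_inactive, satisfaction_slope):
    """Predictive analytics stand-in: logistic score between 0 and 1.

    The weights below are illustrative assumptions, not fitted values.
    """
    z = 0.3 * days_inactive - 5.0 * satisfaction_slope - 2.0
    return 1 / (1 + math.exp(-z))


# Example: daily minutes of activity, then a silent day.
minutes_per_day = [30, 28, 35, 31, 29, 33, 30]
silent_day_flagged = is_anomaly(minutes_per_day, 0)

# Example: satisfaction scores drifting down over six weeks.
weekly_csat = [4.5, 4.4, 4.2, 4.1, 3.9, 3.8]
csat_slope = trend_slope(weekly_csat)  # negative slope means decline

score = risk_score(days_inactive=3, satisfaction_slope=csat_slope)
```

Each function returns a signal rather than a decision, which keeps the output suitable for human review.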

Here is how early intervention AI compares to standard automation:

| Feature | Standard automation | Early intervention AI |
|---|---|---|
| Primary function | Execute predefined tasks | Detect emerging risks |
| Trigger | Rule-based (if/then) | Pattern-based (probabilistic) |
| Timing | Reactive | Proactive |
| Output | Completed task | Risk alert or recommendation |
| Human role | Minimal | Essential for review |
| Key risk | Rigidity | False positives, concept drift |

How these techniques are applied varies across customer service, education, healthcare, and AI model supervision, so your implementation strategy must account for domain-specific data types and intervention workflows. What works in a call center will not map directly onto a school district without significant adaptation.

For organizations in public services, early intervention AI can reduce costly escalations in social services, housing support, and community health programs. The operational savings are real and measurable.

Pro Tip: Monitor for concept drift in your deployed models. As student behavior, customer preferences, or operational conditions shift over time, your AI’s accuracy can quietly degrade. Schedule quarterly model reviews and use AI penetration testing to stress-test your system’s reliability before problems surface in production.
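
One way to make a scheduled drift review concrete is to compare the distribution of an input feature at training time with its distribution today. The sketch below uses the Population Stability Index (PSI), a common choice for this kind of check; it is an assumption on our part, not a method the article prescribes. The function name `psi`, the bin count, and the floor value are illustrative.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a recent sample.

    Bins are equal-width over the baseline's range; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Example: a feature whose values have shifted upward since training.
baseline = list(range(100))
recent = [x + 40 for x in baseline]
drift = psi(baseline, recent)
```

An often-cited rule of thumb treats a PSI above roughly 0.25 as a sign that the feature has shifted enough to warrant a model review, but the right threshold depends on your data and should be validated in your own pilot.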

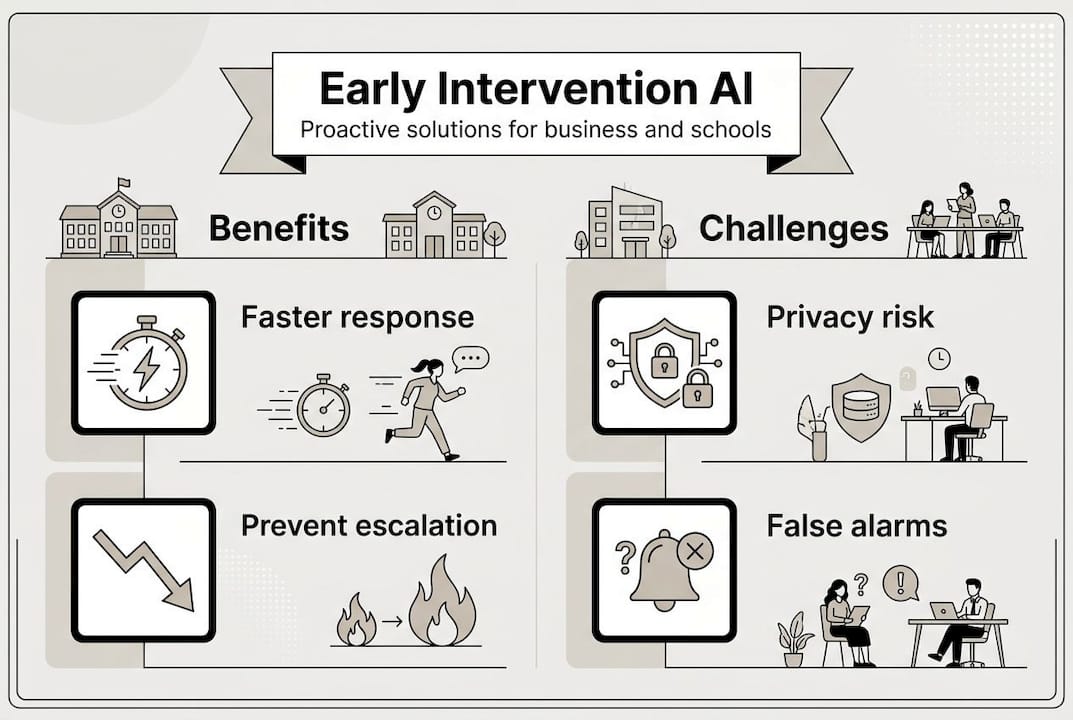

Benefits and challenges of early intervention AI

Understanding the mechanics is only half the picture. Knowing why to invest, and which risks to address, is what makes your strategy hold up.

Key benefits:

- Improved outcomes: Intervening early consistently produces better results than reactive responses, whether that means retaining a customer or supporting a struggling student

- Operational efficiency: Automated flagging reduces the manual effort required to monitor large populations of customers or learners

- Scalability: One well-trained model can monitor thousands of cases simultaneously, something no human team can replicate

- Data-driven prioritization: Teams focus their energy where the risk is highest, not where the noise is loudest

Key challenges:

- Privacy concerns: Continuous behavioral monitoring raises legitimate questions about data consent and storage

- Equity risks: Biased training data can cause AI to flag certain demographic groups disproportionately

- Over-reliance: Teams may defer to AI signals without applying critical human judgment

- Implementation complexity: Integrating early intervention AI with existing systems requires technical and organizational readiness
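
The equity risk in particular can be checked with very little code. The sketch below compares how often the AI flags cases in each group; the function names and the example group labels are hypothetical, and a large gap is a prompt for investigation, not proof of bias.

```python
from collections import defaultdict


def flag_rates_by_group(records):
    """Return {group: share of cases flagged} from (group, was_flagged) pairs."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {g: flagged[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Lowest flag rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Example with two illustrative groups.
records = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]
rates = flag_rates_by_group(records)
ratio = disparity_ratio(rates)
```

Running a check like this during the pilot, before flags drive real interventions, makes disproportionate flagging visible while it is still cheap to correct.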

The ethical implications of AI in early intervention settings are real. Optimism about proactive gains must be balanced with caution around over-reliance, privacy, and the need for robust human oversight. Ignoring these concerns does not make them go away. It makes them more expensive to fix later.

| Domain | Primary reward | Primary risk |
|---|---|---|
| Customer service | Reduced churn, faster resolution | Privacy, false positives |
| Education | Early academic support | Equity bias, stigma |
| Healthcare | Preventive care, cost reduction | Data sensitivity, liability |
| AI model supervision | Reduced output errors | Complexity, oversight gaps |

Following AI integration best practices helps organizations navigate these trade-offs systematically rather than reactively. The goal is not to eliminate risk but to manage it with clear protocols.

Organizations investing in AI in education should note that AI now drives a measurable share of proactive issue detection in learning environments, with adoption accelerating sharply since 2024 as institutions recognize the gap between available data and actionable insight.

Implementing early intervention AI: practical frameworks

With a balanced perspective on the benefits and risks, it is time to translate insight into practical implementation steps.

- Clarify your intervention goals. Define what you are trying to detect and why. Vague goals produce vague models. Be specific: reduce student dropout rates by 15%, or cut customer escalation volume by 20%.

- Select your highest-value domain first. Do not try to deploy across every function simultaneously. Pick one area where early signals are already visible but underused.

- Audit your data quality. Early intervention AI is only as good as the data it trains on. Incomplete or biased datasets produce unreliable signals.

- Assemble multi-specialist oversight. Using multi-specialist judges for model supervision improves robustness and reduces the risk of systematic blind spots in your AI’s decision-making.

- Run a structured pilot. Limit scope, set a clear timeline, and define success metrics before you begin. Pilots without defined endpoints tend to drift.

- Monitor for concept drift in production. As conditions change, your model’s accuracy will shift. Build in scheduled reviews from day one.

Pro Tip: Always balance AI signals with human review, especially for high-consequence decisions. An AI flag should open a conversation, not close a case. This is particularly important in careers education settings where an incorrect intervention can affect a student’s long-term trajectory.
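
The "open a conversation, not close a case" principle can be built into the routing logic itself. In this minimal sketch, the `Flag` fields, the `route` function, and the 0.5 threshold are all hypothetical; the point is only that no branch takes a final action without a person involved.

```python
from dataclasses import dataclass


@dataclass
class Flag:
    case_id: str
    risk_score: float       # 0..1, produced by the model
    high_consequence: bool  # e.g. the decision affects a student's trajectory


def route(flag, review_threshold=0.5):
    """Return the next step for a flag; neither outcome closes the case."""
    if flag.high_consequence or flag.risk_score >= review_threshold:
        return "human_review"
    return "monitor"


# Example: a low score on a high-consequence case still goes to a person.
next_step = route(Flag(case_id="c-001", risk_score=0.2, high_consequence=True))
```

High-consequence cases bypass the score threshold entirely, so a confident-looking low score never removes the human from the loop.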

Suggested pilot metrics to track:

- Accuracy rate: Of the cases the AI flags, how many are genuine risks, and how many genuine risks does it miss?

- Intervention rate: What percentage of flagged cases receive a human follow-up?

- Escalation prevention: How many flagged cases were resolved before reaching a crisis point?

- Operational efficiency: How much staff time is saved per 100 cases reviewed?

- False positive rate: How often does the AI flag cases that turn out to be non-issues?
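
Most of these metrics fall out of a simple tally of pilot outcomes. The sketch below is one possible reading of the list above, not a fixed standard: "accuracy rate" is split into precision and recall, "false positive rate" is taken as false positives over all genuine non-issues, and the names `PilotCounts` and `pilot_metrics` are hypothetical. Escalation prevention and staff time saved need operational data and are left out.

```python
from dataclasses import dataclass


@dataclass
class PilotCounts:
    true_positives: int     # flagged, and a genuine risk
    false_positives: int    # flagged, but a non-issue
    true_negatives: int     # not flagged, and a non-issue
    false_negatives: int    # not flagged, but a genuine risk
    flags_followed_up: int  # flagged cases that received human follow-up


def ratio(numerator, denominator):
    """Safe division that returns 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def pilot_metrics(c):
    flagged = c.true_positives + c.false_positives
    return {
        # Share of flags that were genuine risks.
        "precision": ratio(c.true_positives, flagged),
        # Share of genuine risks that were flagged.
        "recall": ratio(c.true_positives, c.true_positives + c.false_negatives),
        # Share of non-issues that were flagged anyway.
        "false_positive_rate": ratio(
            c.false_positives, c.false_positives + c.true_negatives
        ),
        # Share of flags that received a human follow-up.
        "intervention_rate": ratio(c.flags_followed_up, flagged),
    }


# Example with illustrative counts from a 1,000-case pilot.
metrics = pilot_metrics(PilotCounts(40, 10, 930, 20, 45))
```

Reporting precision and recall separately matters here because early-warning populations are usually imbalanced: a model can look accurate overall while still missing most genuine risks.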

Review your AI training insights regularly to keep your models current and your teams informed about what the data is actually telling you.

Enhance your operations with early intervention AI

You now have a clear picture of what early intervention AI is, how it works, and how to implement it responsibly. The next step is connecting that knowledge to tools and strategies built for your specific context. At airitual.com, we work directly with businesses and educational institutions to design AI systems that detect risk early and act on it intelligently. Explore our AI integration best practices guide for operational frameworks you can apply immediately. If education is your focus, our AI in education resources and AI in careers education insights offer targeted strategies for student engagement and early academic support. Schedule a FREE strategy session to discuss how early intervention AI fits your organization’s goals.

Frequently asked questions

What makes early intervention AI different from regular automation?

Early intervention AI detects subtle issues or opportunities and triggers action before escalation, while standard automation simply executes predefined, routine tasks without assessing emerging risk.

What are common pitfalls when implementing early intervention AI?

The most frequent issues include over-reliance on AI signals, inadequate data privacy protocols, and missing nuanced cases that require expert human review. Ethical concerns around privacy and equity must be addressed in your implementation plan from the start.

How do I measure the success of an early intervention AI pilot?

Track accuracy rate, intervention rate, escalation prevention, operational efficiency, and false positive rate as your core pilot metrics. Monitoring for concept drift in production is equally important to ensure sustained accuracy over time.

Should early intervention AI replace human judgment entirely?

No. Early intervention AI is most effective when it works alongside expert human oversight. Balancing AI with human judgment is especially critical for complex or high-stakes decisions where context and nuance matter.

Which domains use early intervention AI most actively?

It is most prevalent in customer service, education, healthcare screening, and AI model supervision, with each domain applying the core detection principles to its own data types and intervention workflows.
