Many business leaders assume AI works best when left alone to make decisions. This assumption drives organizations toward fully autonomous systems that promise efficiency but often deliver inconsistent results and erode customer trust. Human-in-the-loop (HITL) AI takes a different approach: it integrates human judgment into automated workflows, creating systems that balance speed with reliability. In this article, you will learn what human-in-the-loop AI is, how it transforms business operations, and why it matters for your organization’s competitive advantage.

Table of Contents

- Key takeaways

- What is human-in-the-loop AI? Defining the concept and core components

- How human-in-the-loop AI boosts business efficiency and customer engagement

- Design considerations and challenges in implementing human-in-the-loop AI

- Future outlook and strategic steps for business leaders to adopt human-in-the-loop AI

- Explore AI-driven efficiency solutions for your business

- FAQ

Key Takeaways

| Point | Details |
|---|---|
| Definition and value | HITL blends human judgment with AI at critical decision points to improve reliability and trust in automated workflows. |
| Integration patterns | HITL uses synchronous loops, asynchronous loops, and human on the loop configurations to balance speed and oversight. |
| Key components | Task queue managers, verification mechanisms, API integrations, and feedback loops coordinate decisions and improve model performance. |
| Measured impact | Organizations report a 31 percent rise in decision accuracy and substantial cost savings with faster hiring and fewer errors. |

What is human-in-the-loop AI? Defining the concept and core components

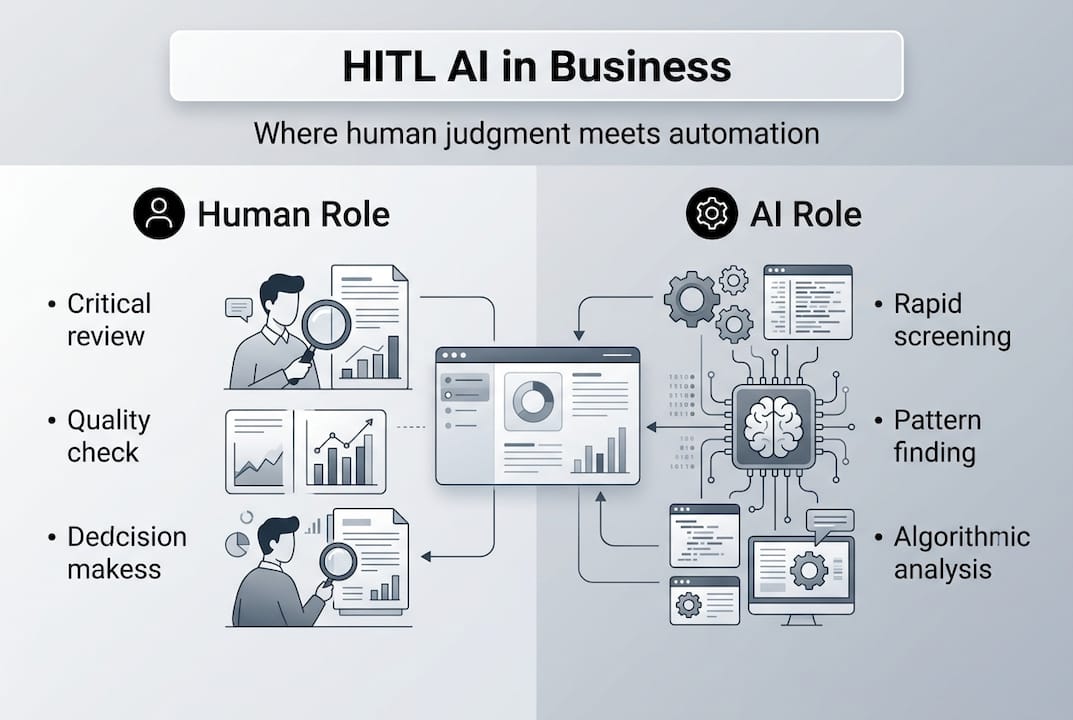

Human-in-the-loop AI represents a hybrid approach where artificial intelligence systems integrate human expertise at critical decision points. Unlike fully autonomous systems that operate independently, HITL architectures recognize that certain tasks require human judgment, contextual understanding, or ethical considerations that machines cannot replicate. This integration happens through structured touchpoints where humans review, correct, or approve AI outputs before they impact business outcomes.

The core value proposition centers on reliability. AI excels at processing massive datasets and identifying patterns, but struggles with edge cases, nuanced judgment calls, and situations requiring empathy or ethical reasoning. By inserting human oversight at strategic moments, you capture the speed and scale of automation while maintaining the quality and trustworthiness that stakeholders demand. This approach proves especially valuable in high-stakes domains like healthcare diagnostics, financial fraud detection, and customer service interactions where errors carry significant consequences.

HITL systems employ three primary integration patterns. Synchronous loops require human input before the AI proceeds, creating real-time checkpoints for critical decisions. Asynchronous loops allow AI to act immediately but route outputs to humans for post-hoc review and correction, enabling continuous learning. Human-on-the-loop configurations position people as monitors who intervene only when the system flags exceptions or uncertainty, optimizing for scale while preserving oversight capability.
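
To make the three patterns concrete, here is a minimal Python sketch of how each one gates an AI decision. The names `LoopMode`, `Outcome`, and `handle`, and the `flag_below` cutoff of 0.6, are illustrative assumptions rather than part of any particular HITL product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class LoopMode(Enum):
    SYNCHRONOUS = "synchronous"        # human approves before the action runs
    ASYNCHRONOUS = "asynchronous"      # action runs, human reviews afterwards
    HUMAN_ON_THE_LOOP = "on_the_loop"  # human only sees flagged exceptions


@dataclass
class Outcome:
    executed: bool           # did the AI decision take effect?
    queued_for_review: bool  # is a human expected to look at it?


def handle(
    mode: LoopMode,
    confidence: float,
    human_approves: Optional[bool] = None,  # None means "not reviewed yet"
    flag_below: float = 0.6,                # assumed uncertainty cutoff
) -> Outcome:
    if mode is LoopMode.SYNCHRONOUS:
        # Nothing executes until a human has explicitly approved.
        return Outcome(
            executed=human_approves is True,
            queued_for_review=human_approves is None,
        )
    if mode is LoopMode.ASYNCHRONOUS:
        # The AI acts immediately; every output is reviewed after the fact.
        return Outcome(executed=True, queued_for_review=True)
    # Human-on-the-loop: act immediately, escalate only uncertain cases.
    return Outcome(executed=True, queued_for_review=confidence < flag_below)
```

The only thing that changes between modes is when, and whether, a person sees the decision, which is why the choice of pattern is mostly a question of risk tolerance rather than model quality.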

Key architectural components include:

- Task queue managers that route decisions to appropriate human reviewers based on complexity, confidence scores, or business rules

- Verification mechanisms that track human feedback and measure agreement rates between AI predictions and human judgments

- API integrations that connect AI engines with human review platforms, enabling seamless handoffs and data flow

- Feedback loops that channel human corrections back into training pipelines, improving model performance over time
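
As a rough illustration of how these components fit together, the sketch below combines a task queue, a verification measure, and a feedback loop in one small class. `ReviewQueue` and its methods are hypothetical names, and serving the lowest-confidence item first is an assumed routing policy; a production system would typically sit behind an API and a persistent store.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ReviewQueue:
    # Heap entries are (confidence, insertion order, item id).
    _heap: List[Tuple[float, int, str]] = field(default_factory=list)
    _counter: int = 0
    # Feedback entries are (item id, AI label, human label).
    feedback: List[Tuple[str, str, str]] = field(default_factory=list)

    def submit(self, item_id: str, confidence: float) -> None:
        """Task queue: enqueue an AI output for human review."""
        heapq.heappush(self._heap, (confidence, self._counter, item_id))
        self._counter += 1

    def next_item(self) -> str:
        """Hand the least confident item to the next available reviewer."""
        return heapq.heappop(self._heap)[2]

    def record(self, item_id: str, ai_label: str, human_label: str) -> None:
        """Verification: store the reviewer's judgment next to the AI's."""
        self.feedback.append((item_id, ai_label, human_label))

    def agreement_rate(self) -> float:
        """Share of reviewed items where the human kept the AI's label."""
        if not self.feedback:
            return 0.0
        agreed = sum(1 for _, ai, human in self.feedback if ai == human)
        return agreed / len(self.feedback)

    def corrections(self) -> List[Tuple[str, str]]:
        """Feedback loop: corrected labels to send to a retraining pipeline."""
        return [(item, human) for item, ai, human in self.feedback if ai != human]
```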

The distinction between HITL and autonomous AI lies in accountability and adaptability. Autonomous systems make independent decisions, placing full responsibility on the algorithm and its training data. HITL systems distribute accountability, allowing humans to catch errors, apply contextual knowledge, and guide the AI toward better performance. This shared responsibility model reduces risk while accelerating the path to production for AI applications that might otherwise need years of additional data collection and training to achieve acceptable accuracy.

How human-in-the-loop AI boosts business efficiency and customer engagement

Businesses implementing HITL architectures report substantial operational improvements backed by measurable data. Decision accuracy increases by 31% when human judgment supplements machine predictions, particularly in classification tasks where edge cases and ambiguous inputs challenge pure automation. This accuracy gain translates directly to reduced rework, fewer customer complaints, and lower costs associated with fixing errors downstream.

The financial impact extends beyond accuracy improvements. Organizations using HITL AI for hiring reduce time-to-hire by 75% by automating initial resume screening while routing borderline candidates to human recruiters for nuanced evaluation. Logistics companies implementing HITL route optimization achieve 50% cost reductions by combining algorithmic efficiency with human judgment about real-world constraints like weather, driver preferences, and customer relationships that algorithms miss.

False positive reduction represents another critical benefit. Security and fraud detection systems suffer when autonomous AI generates excessive alerts that overwhelm human analysts. HITL approaches cut false positives by 67% by having analysts review and label uncertain cases, creating training data that teaches the model to distinguish genuine threats from benign anomalies. This improvement preserves analyst capacity for investigating real incidents rather than chasing false alarms.

Customer engagement quality improves when AI-driven systems maintain human touchpoints for complex or emotionally charged interactions. Chatbots handle routine inquiries autonomously but escalate frustrated customers or unusual requests to human agents who bring empathy and creative problem solving. This tiered approach delivers fast service for simple needs while ensuring meaningful human connection when it matters most, building trust that pure automation cannot achieve.

HITL frameworks resolve the apparent trade-off between automation and human productivity. Rather than replacing humans, these systems redirect human effort toward high-value activities. Content moderation teams shift from reviewing every post to focusing on nuanced cases flagged by AI. Financial analysts move from data entry to strategic interpretation of AI-generated insights. Customer service representatives handle complex relationship building instead of answering the same basic questions repeatedly.

Key efficiency drivers include:

- Reduced processing time for routine decisions while maintaining quality standards

- Lower training costs as AI handles basic tasks and humans focus on edge cases requiring expertise

- Improved compliance through documented human oversight of automated decisions

- Faster iteration cycles as human feedback continuously refines AI performance

Industry-specific applications demonstrate versatility. Fintech companies use HITL for loan approvals, letting algorithms assess straightforward applications while routing complex financial situations to underwriters. Healthcare providers employ HITL diagnostic tools where AI flags potential conditions and radiologists confirm findings, combining machine pattern recognition with clinical judgment. E-commerce platforms leverage HITL for product categorization, using AI for bulk classification and human reviewers for ambiguous or new product types.

Design considerations and challenges in implementing human-in-the-loop AI

Successful HITL implementation requires careful system architecture that addresses both technical and human factors. Strategic task routing forms the foundation. You must define clear criteria for which decisions require human input based on confidence thresholds, business impact, and regulatory requirements. A loan application system might route requests under $10,000 with high confidence scores directly to approval while flagging larger amounts or borderline credit profiles for human review.
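
The loan example above can be expressed as a simple routing rule. This is a sketch under stated assumptions: the $10,000 limit comes from the example, while the 0.90 confidence cutoff, the `borderline_credit` flag, and the function name `route_loan` are hypothetical placeholders you would replace with your own criteria.

```python
def route_loan(
    amount: float,
    confidence: float,
    borderline_credit: bool,
    amount_limit: float = 10_000,  # from the example above
    min_confidence: float = 0.90,  # assumed cutoff for "high confidence"
) -> str:
    """Return 'auto_approve' or 'human_review' for a loan application."""
    small_enough = amount < amount_limit
    confident_enough = confidence >= min_confidence
    if small_enough and confident_enough and not borderline_credit:
        return "auto_approve"
    # Larger amounts, low confidence, or borderline credit go to a person.
    return "human_review"
```

Keeping the thresholds as parameters rather than hard-coded values makes it easier to tighten or relax routing as performance data accumulates.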

Monitoring mechanisms prevent quality degradation over time. Track agreement rates between AI predictions and human decisions to identify model drift or systematic biases. Measure review times to detect reviewer fatigue or insufficient training. Implement spot checks where multiple reviewers assess the same cases to calibrate consistency and to identify outliers who may need additional guidance or who may be introducing their own biases into the system.
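
For the spot checks, you need some way to quantify how consistently two reviewers label the same cases. The article does not prescribe a statistic; Cohen's kappa is one common choice because it discounts agreement that would occur by chance. The function below is a minimal sketch, and the name `cohen_kappa` and the example labels are illustrative.

```python
from collections import Counter
from typing import Sequence


def cohen_kappa(reviewer_a: Sequence[str], reviewer_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two reviewers on the same cases."""
    if len(reviewer_a) != len(reviewer_b) or not reviewer_a:
        raise ValueError("Both reviewers must label the same non-empty case set")
    n = len(reviewer_a)
    # Observed agreement: fraction of cases with identical labels.
    observed = sum(a == b for a, b in zip(reviewer_a, reviewer_b)) / n
    # Expected agreement if each reviewer labeled at random with their own
    # label frequencies.
    counts_a, counts_b = Counter(reviewer_a), Counter(reviewer_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used a single identical label throughout
    return (observed - expected) / (1 - expected)


a = ["ok", "ok", "fraud", "fraud"]
b = ["ok", "ok", "fraud", "ok"]
print(cohen_kappa(a, b))  # 0.5: three of four labels match, half expected by chance
```

A value near 1 suggests reviewers apply the rubric consistently, while a value near 0 suggests their agreement is no better than chance and a calibration session may be due.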

Automation bias poses a significant risk: humans defer too readily to AI recommendations instead of applying independent judgment. When reviewers see an AI confidence score of 95%, they often rubber-stamp the decision without critical evaluation. This cognitive offloading defeats the purpose of human oversight, creating a false sense of accountability while allowing algorithmic errors to propagate unchecked.

Critical design principles to mitigate risks:

- Present AI outputs without confidence scores during initial human review to encourage independent assessment

- Mix easy and ambiguous cases in random order so reviewers cannot predict which decisions are genuinely ambiguous and which the AI is confident about

- Provide clear rubrics and decision frameworks that guide consistent evaluation across reviewers

- Rotate reviewers across different case types to prevent specialization that leads to autopilot behavior

- Implement regular calibration sessions where teams discuss challenging cases and align on standards

Reviewer overload emerges when systems route too many cases to humans or fail to prioritize effectively. If your fraud detection system flags 40% of transactions for review, analysts cannot maintain focus and quality suffers. Balance human-in-the-loop with human-on-the-loop approaches where AI handles most decisions autonomously and humans monitor aggregate performance metrics, intervening only when patterns suggest problems.

Pro Tip: Design feedback loops that capture not just whether the human agreed with the AI, but why they disagreed and what contextual factors influenced their decision. This rich feedback trains models faster and helps identify systematic gaps in the AI’s understanding that simple correction labels miss.
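
One way to capture this richer feedback is to store the reviewer's reason and context alongside the decision itself. The record layout below is a hypothetical sketch: `ReviewFeedback`, its fields, and `top_disagreement_reasons` are assumed names, not a standard schema.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ReviewFeedback:
    case_id: str
    ai_decision: str
    human_decision: str
    reason: Optional[str] = None  # why the reviewer disagreed, if they did
    context_factors: List[str] = field(default_factory=list)  # e.g. "self-employed"

    @property
    def agreed(self) -> bool:
        return self.ai_decision == self.human_decision


def top_disagreement_reasons(
    records: List[ReviewFeedback], n: int = 3
) -> List[Tuple[str, int]]:
    """Most frequent reasons reviewers gave for overriding the AI."""
    reasons = Counter(r.reason for r in records if not r.agreed and r.reason)
    return reasons.most_common(n)
```

Tallying the reasons, rather than just counting overrides, points to the systematic gaps worth addressing first in retraining.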

The human-on-the-loop model works well for scaled operations where individual case review becomes impractical. Instead of reviewing every content moderation decision, human moderators monitor dashboards showing approval rates, user appeals, and edge case patterns. When metrics deviate from expected ranges, they investigate and adjust AI parameters or retrain models. This approach maintains oversight while enabling the volume throughput that business objectives require.
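
A dashboard check of this kind can be as simple as comparing each metric against an expected range. The sketch below assumes hypothetical metric names (`approval_rate`, `appeal_rate`) and ranges; the right values depend on your own baseline data.

```python
from typing import Dict, List, Tuple


def out_of_range(
    metrics: Dict[str, float],
    expected: Dict[str, Tuple[float, float]],
) -> List[str]:
    """Return the names of metrics that are missing or outside their range."""
    alerts = []
    for name, (low, high) in expected.items():
        value = metrics.get(name)
        # A missing metric is treated as an alert, since silence can hide problems.
        if value is None or not (low <= value <= high):
            alerts.append(name)
    return alerts


expected_ranges = {"approval_rate": (0.85, 0.95), "appeal_rate": (0.0, 0.02)}
todays_metrics = {"approval_rate": 0.70, "appeal_rate": 0.01}
print(out_of_range(todays_metrics, expected_ranges))  # ['approval_rate']
```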

Interface design impacts effectiveness significantly. Cluttered review screens with excessive information slow decisions and increase errors. Streamlined interfaces that highlight relevant factors and provide one-click feedback options improve both speed and accuracy. A/B test different layouts and workflows with actual reviewers to optimize the human experience rather than assuming what will work.

Strategic HITL system design requires ongoing refinement as both AI capabilities and business needs evolve. Quarterly reviews of routing rules, confidence thresholds, and reviewer performance ensure the system adapts rather than calcifies around initial assumptions that may no longer hold.

Future outlook and strategic steps for business leaders to adopt human-in-the-loop AI

Starting your HITL journey requires a phased approach that builds capability while managing risk. Begin with a single high-value use case where AI can deliver quick wins but human oversight remains essential. Customer inquiry routing, document classification, or preliminary candidate screening offer manageable scope with clear success metrics. Deploy the system with conservative routing rules that send more cases to humans initially, then gradually increase AI autonomy as confidence and performance data accumulate.
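
The gradual shift toward more AI autonomy can be driven by a simple rule: lower the confidence threshold for automatic handling only when recent human reviews agree with the AI often enough. The function below is a sketch under assumed values; the target agreement of 95%, the minimum of 500 reviewed cases, the step size, and the floor and ceiling are all placeholders to tune for your own risk tolerance.

```python
def next_threshold(
    current: float,
    agreement_rate: float,
    reviewed_cases: int,
    target_agreement: float = 0.95,  # assumed bar for trusting the AI more
    min_cases: int = 500,            # assumed minimum evidence before changing
    step: float = 0.02,
    floor: float = 0.80,
    ceiling: float = 0.99,
) -> float:
    """Confidence threshold above which cases skip human review."""
    if reviewed_cases < min_cases:
        return current  # not enough evidence yet, keep routing as is
    if agreement_rate >= target_agreement:
        # Humans rarely override the AI: send fewer cases to review.
        return max(floor, round(current - step, 4))
    # Humans override too often: send more cases to review.
    return min(ceiling, round(current + step, 4))
```

Starting at a high threshold and stepping down slowly mirrors the conservative rollout described above, and the upward step gives you an automatic brake if agreement drops.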

Verified operator networks and specialized APIs accelerate implementation. Rather than building review platforms from scratch, leverage existing infrastructure that connects AI systems with qualified human reviewers. These platforms handle task distribution, quality control, and feedback collection, letting you focus on defining business rules and success criteria rather than engineering workflow systems.

Comparing approaches clarifies strategic choices:

| Approach | Best For | Limitations | Cost Profile |
|---|---|---|---|
| Fully Autonomous AI | High-volume, low-stakes, well-defined tasks | Struggles with edge cases, limited adaptability | Low ongoing, high upfront training |
| Human-in-the-Loop | High-stakes decisions, nuanced judgment, continuous learning needs | Slower than pure automation, requires reviewer management | Moderate ongoing, moderate upfront |
| Human-on-the-Loop | Scaled operations with periodic oversight needs | May miss individual errors, requires robust monitoring | Low ongoing, moderate upfront |
| Pure Human Process | Highly complex, relationship-driven, creative work | Does not scale, expensive, slower | High ongoing, low upfront |

Emerging applications expand HITL relevance beyond traditional domains. Generative AI content creation benefits from human reviewers who assess factual accuracy, brand alignment, and tone before publication. AI-generated code requires developer review to catch security vulnerabilities and ensure maintainability. Personalized marketing campaigns use HITL to validate that algorithmic recommendations align with brand values and avoid unintended messaging.

Regulatory trends increasingly mandate human oversight for consequential automated decisions. Business leaders should prioritize HITL in customer-facing functions and high-stakes operations where errors damage relationships or violate compliance requirements. Financial services, healthcare, employment, and housing decisions face growing scrutiny that makes pure automation legally and reputationally risky.

Integration with existing workflows determines adoption success. Map current decision processes to identify natural insertion points for AI assistance and human review. A claims processing workflow might add AI-powered damage assessment after initial filing but before adjuster assignment. This integration preserves familiar process steps while introducing efficiency gains that staff can recognize and appreciate.

Key implementation steps:

- Define clear success metrics beyond just accuracy, including processing time, cost per decision, and user satisfaction

- Start with shadow mode where AI makes recommendations but humans make all final decisions, building trust and training data

- Establish feedback channels where reviewers can report problems and suggest improvements, creating ownership

- Plan for continuous model retraining using human feedback to improve AI performance over time

- Document decision criteria and edge cases to build institutional knowledge that survives staff turnover
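
Shadow mode, mentioned in the steps above, is straightforward to prototype: the AI's recommendation is logged but never acted on, and the human decision is always the one returned. `ShadowModeLog` and its methods are hypothetical names used for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ShadowModeLog:
    # Each record is (case id, AI recommendation, human decision).
    records: List[Tuple[str, str, str]] = field(default_factory=list)

    def decide(self, case_id: str, ai_recommendation: str, human_decision: str) -> str:
        """Log the AI's suggestion, but always return the human's decision."""
        self.records.append((case_id, ai_recommendation, human_decision))
        return human_decision

    def match_rate(self) -> float:
        """How often the AI would have made the same call as the human."""
        if not self.records:
            return 0.0
        matches = sum(1 for _, ai, human in self.records if ai == human)
        return matches / len(self.records)
```

The logged records double as labeled training data, and the match rate gives you evidence for deciding when the AI is ready to handle some cases on its own.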

Pro Tip: Focus measurement on business outcomes rather than just AI accuracy metrics. A model with 85% accuracy that reduces processing time by 60% and cuts costs by 40% can deliver more value than a 95% accurate model that only marginally improves efficiency.

The future of work involves humans and AI collaborating rather than competing. HITL architectures represent this partnership model, letting each party contribute their strengths. As AI capabilities advance, the specific tasks requiring human judgment will shift, but the fundamental need for human oversight in complex, high-stakes, and relationship-driven contexts will persist. Organizations that build HITL competency now position themselves to adapt as technology evolves rather than facing disruptive replacement cycles.

Explore AI-driven efficiency solutions for your business

Implementing human-in-the-loop AI transforms how your organization balances automation with accountability. The insights shared here provide a foundation, but translating concepts into operational systems requires strategic planning and technical expertise. Whether you are exploring initial AI adoption or refining existing implementations, the right approach considers your specific industry context, risk tolerance, and growth objectives. Discover how generative search optimization steps can enhance your customer engagement while maintaining the quality controls that HITL principles emphasize. Explore our generative search optimization process guide for frameworks that integrate AI capabilities with human judgment. Review the best generative search tools that support hybrid workflows, enabling your team to leverage AI efficiency without sacrificing oversight.

FAQ

What are common use cases for human-in-the-loop AI in business?

HITL AI excels in high-stakes decision-making like loan approvals, medical diagnostics, and fraud detection where errors carry significant consequences. Exception handling scenarios such as customer service escalations and content moderation benefit from human judgment on edge cases. Customer-facing interactions including personalized recommendations and complex support inquiries maintain quality through strategic human touchpoints.

How does human-in-the-loop AI improve decision accuracy?

Human feedback corrects AI errors in real time, preventing mistakes from impacting customers or operations. This correction data feeds back into training pipelines, teaching models to recognize patterns they previously missed. Continuous learning cycles compound improvements over time, with each human intervention making the AI more capable and reducing future review needs.

What risks should businesses watch for when implementing human-in-the-loop AI?

Automation bias causes reviewers to defer to AI recommendations without applying critical judgment, undermining the value of human oversight. Cognitive offloading occurs when humans become passive monitors rather than active decision-makers, creating accountability gaps. Proper system design with clear rubrics, randomized case difficulty, and regular calibration sessions mitigates these risks while maintaining the efficiency gains that justify HITL investment.
