You’ve deployed an AI solution, but now you face the real challenge: proving it works. Business leaders, educational administrators, and government officials constantly wrestle with quantifying AI’s true effect on operations and engagement. Without clear measurement frameworks, even the most promising AI initiatives become black boxes, making it impossible to justify investments or scale successful programs. This guide walks you through a practical, step-by-step approach to measure AI impact accurately, helping you move from uncertainty to data-driven confidence in your AI strategy.

Table of Contents

- Key takeaways

- Understanding the problem: Why measuring AI impact matters

- Preparing to measure AI impact: Setting up your approach

- Executing AI impact measurement: Data collection and evaluation methods

- Verifying and optimizing AI impact: Monitoring and continuous improvement

- Explore AI tools and training for enhanced impact

- Frequently asked questions about measuring AI impact

Key takeaways

| Point | Details |
|---|---|
| Metrics and stakeholder alignment | Establish clear measurement goals with buy-in from key stakeholders to ensure everyone understands what success looks like. |
| Data collection and baselines | Gather relevant data early and establish baseline metrics so you can quantify change over time. |
| Structured evaluation frameworks | Use a structured framework to map AI activities to concrete outcomes and avoid confusing activity metrics with impact. |
| Quality and attribution challenges | Anticipate data quality issues and attribution gaps and implement processes to improve data reliability and causal links. |
| Ongoing monitoring for improvement | Set up continuous monitoring and regular reviews to refine models and sustain AI performance gains. |

Understanding the problem: Why measuring AI impact matters

Measuring AI impact isn’t just about proving ROI. It fundamentally shapes how you allocate resources, justify budgets, and scale solutions across your organization. Many organizations struggle with defining meaningful AI impact metrics, leading to inconclusive evaluations that leave decision-makers skeptical about continued investment. When you can’t demonstrate concrete results, even transformative AI projects risk getting cut during budget reviews.

The stakes vary by sector, but the challenge remains universal. Business leaders need to show efficiency gains and cost reductions. Educational administrators must prove engagement improvements and learning outcomes. Government officials face accountability pressures to demonstrate taxpayer value. Yet most organizations share common barriers that prevent effective measurement:

- Unclear or overly broad goals that resist quantification

- Poor data collection practices before AI implementation

- Lack of baseline metrics for comparison

- Confusion between AI activity metrics and actual impact

- Unrealistic expectations about measurement timelines

These obstacles feed a dangerous misconception: that AI delivers value automatically, with no tracking required. This mindset treats AI as a magic solution rather than a tool requiring careful evaluation and refinement. The reality is far different. AI systems need continuous measurement to identify what works, what doesn’t, and where adjustments deliver the greatest value.

“Without measurement, AI becomes an act of faith rather than a strategic investment. Leaders who embrace rigorous impact assessment gain the insights needed to maximize returns and scale intelligently.”

Different sectors require tailored impact metrics. Manufacturing operations focus on throughput and defect rates. Educational institutions track student engagement and completion rates. Government agencies measure service delivery speed and citizen satisfaction. The common thread is specificity. Vague metrics like “improved efficiency” mean nothing without concrete numbers attached to clear processes.

Pro Tip: Start your measurement planning by asking what decision you’ll make with the data. If the answer is unclear, refine your metrics until they directly inform actionable choices about scaling, modifying, or discontinuing your AI initiative.

Preparing to measure AI impact: Setting up your approach

Successful measurement begins long before you analyze results. The preparation phase determines whether your evaluation produces actionable insights or wasted effort, and it starts with clearly defined business objectives and aligned KPIs that everyone understands and supports.

Start by defining specific, measurable goals for your AI solution. Avoid broad statements like “improve operations” or “increase engagement.” Instead, target precise outcomes: reduce customer service response time by 40%, increase student assignment completion by 25%, or decrease permit processing time by 30%. Specific goals create clear targets for measurement and make success obvious to stakeholders.
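To make a target like “reduce response time by 40%” checkable, it can help to express it as a signed percent change against the baseline. The sketch below is illustrative only: the function names and the sample numbers are assumptions, not part of any particular tool.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline, in percent (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100.0


def goal_met(baseline: float, current: float, target_pct: float) -> bool:
    """Return True when the observed change reaches the target.

    target_pct is signed: -40 means "reduce by at least 40%",
    +25 means "increase by at least 25%".
    """
    change = percent_change(baseline, current)
    return change <= target_pct if target_pct < 0 else change >= target_pct


# Hypothetical example: average response time fell from 10.0 to 5.5 minutes,
# measured against a goal of a 40% reduction.
change = percent_change(10.0, 5.5)
print(f"{change:.1f}% change, goal met: {goal_met(10.0, 5.5, -40)}")
```

Writing the goal as a signed number forces the team to agree on both the direction and the size of the change before any results come in.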

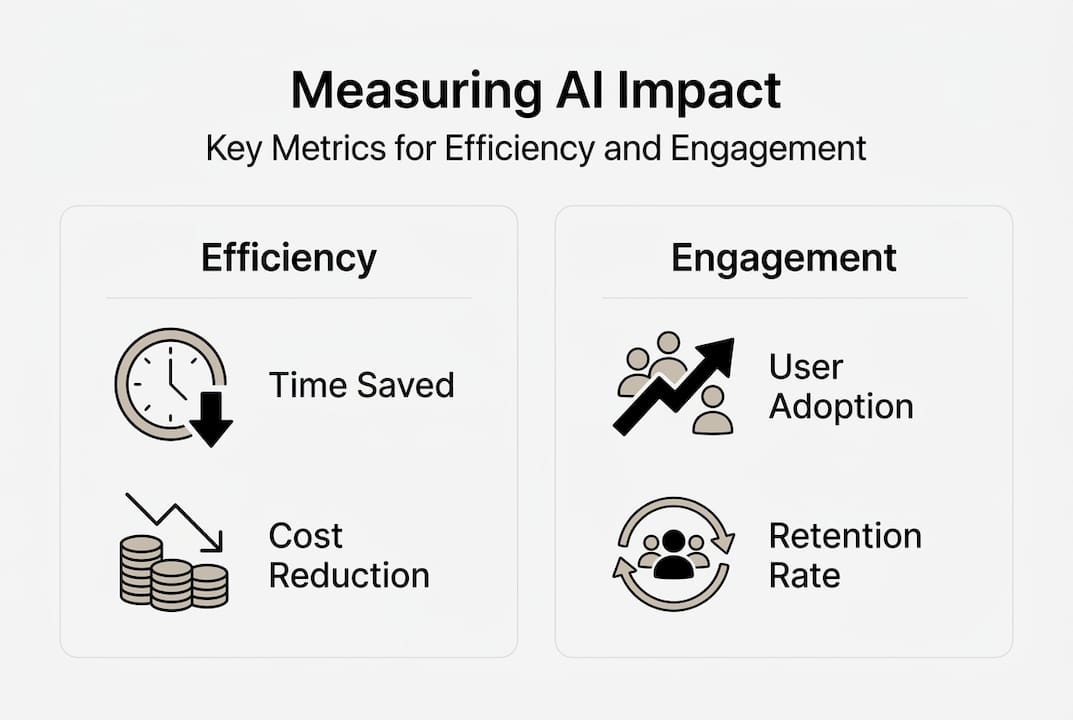

Next, identify KPIs that directly reflect your goals. Choose metrics you can measure consistently before and after AI implementation. For efficiency goals, consider time savings, cost reductions, error rates, or throughput increases. For engagement objectives, track interaction frequency, session duration, completion rates, or satisfaction scores. The key is selecting metrics that capture real impact rather than vanity numbers.

Follow these preparation steps systematically:

- Document your current state with comprehensive baseline data collection across all relevant metrics for at least one full business cycle

- Map stakeholder expectations by interviewing key decision-makers to understand what success looks like from each perspective

- Design your measurement framework specifying data sources, collection methods, analysis intervals, and reporting formats

- Establish data governance protocols ensuring consistent collection, storage, and access throughout the measurement period

- Create comparison scenarios defining control groups or phased rollouts that isolate AI impact from other variables

- Set realistic timelines recognizing that meaningful impact often takes months to materialize and measure accurately

Baseline data collection deserves special attention. You need comprehensive before pictures to make valid after comparisons. Collect data for at least one complete business cycle to account for seasonal variations and normal fluctuations. Include both quantitative metrics and qualitative feedback to capture the full impact picture.
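One way to turn a baseline period into something you can compare against is to summarize its average and its normal spread. The sketch below assumes monthly values for a single KPI and uses a band of two standard deviations as a rough rule of thumb for “normal fluctuation”; both the band width and the sample figures are assumptions for illustration.

```python
from statistics import mean, stdev


def summarize_baseline(values):
    """Summarize a baseline series as its mean and a rough 'normal' band."""
    if len(values) < 2:
        raise ValueError("need at least two baseline observations")
    center = mean(values)
    spread = stdev(values)  # sample standard deviation
    return {
        "mean": center,
        "stdev": spread,
        "low": center - 2 * spread,   # assumed rule of thumb: 2 stdevs
        "high": center + 2 * spread,
    }


# Hypothetical baseline: average handling time (minutes) over 12 months.
baseline_minutes = [21, 20, 22, 23, 21, 19, 20, 22, 24, 23, 21, 20]
summary = summarize_baseline(baseline_minutes)

post_ai_value = 15.0  # hypothetical month after AI rollout
outside_band = not (summary["low"] <= post_ai_value <= summary["high"])
print(summary, "outside normal band:", outside_band)
```

A post-rollout value inside the band may simply be ordinary variation, which is why a full business cycle of baseline data matters.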

Stakeholder alignment prevents measurement disputes later. When executives, managers, and end users agree upfront on success criteria, you avoid debates about whether results matter. Schedule alignment sessions where stakeholders review and approve the measurement framework before implementation begins.

Pro Tip: Document your measurement framework in a single accessible document that specifies exactly what you’ll measure, how, when, and why. This reference guide keeps everyone aligned and provides a roadmap for your evaluation team throughout the measurement period.

Executing AI impact measurement: Data collection and evaluation methods

With preparation complete, execution focuses on systematic data collection and rigorous analysis. This phase transforms your measurement framework from plan to practice, generating the evidence needed to quantify AI impact accurately.

Effective measurement requires both quantitative and qualitative data. Quantitative metrics provide hard numbers: time saved, costs reduced, volume processed, errors eliminated. Qualitative data captures user experience, satisfaction changes, and unexpected benefits or challenges that numbers alone miss. Combine both types for a complete impact picture.

Control groups or phased rollouts strengthen your analysis by isolating AI impact from other factors. If possible, maintain a comparison group using traditional methods while testing AI with another group. This approach clearly attributes improvements to AI rather than general trends, training effects, or seasonal changes. When full control groups aren’t feasible, phased rollouts let you compare early adopters against later groups.
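A common way to use a comparison group is a difference-in-differences estimate: subtract the change seen in the control group from the change seen in the AI group. The article does not name this technique, so treat the sketch below as one possible approach. It assumes both groups would have followed similar trends without AI, and all names and numbers are hypothetical.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the AI group minus change in the comparison group."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical weekly average processing times (minutes).
ai_before = [20, 22, 21]
ai_after = [14, 15, 16]
control_before = [20, 21, 22]
control_after = [19, 20, 18]

effect = diff_in_diff(ai_before, ai_after, control_before, control_after)
print(f"Estimated AI effect: {effect:+.1f} minutes")
```

In this made-up data the AI group improved by 6 minutes, but the control group also improved by 2, so only 4 minutes of the gain is attributed to AI.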

A structured evaluation framework and reliable data sources are vital to accurately measuring AI impact. Choose frameworks that match your sector and goals:

- ROI analysis calculates financial return by comparing AI costs against measurable benefits like labor savings, revenue increases, or cost reductions

- User engagement metrics track interaction patterns, feature adoption, session frequency, and satisfaction scores to measure engagement improvements

- Workflow efficiency measures compare process completion times, steps required, error rates, and throughput before and after AI implementation

- Outcome quality assessments evaluate end results like customer satisfaction, learning outcomes, or service delivery quality beyond pure efficiency

| Evaluation Method | Best For | Key Metrics | Timeline |
|---|---|---|---|
| ROI Analysis | Cost justification and budget decisions | Cost savings, revenue impact, efficiency gains | 6-12 months |
| Engagement Tracking | User adoption and satisfaction | Active users, session duration, feature usage | 3-6 months |
| Efficiency Measurement | Process improvement validation | Time savings, throughput, error reduction | 3-6 months |
| Quality Assessment | Outcome improvement verification | Satisfaction scores, completion rates, accuracy | 6-12 months |
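For the ROI analysis row, the basic arithmetic is net benefit divided by total cost. The sketch below uses the standard ROI formula; the cost and benefit categories and all figures are hypothetical placeholders for your own numbers.

```python
def roi_percent(total_benefits: float, total_costs: float) -> float:
    """Standard ROI: net benefit as a percentage of total cost."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs * 100.0


# Hypothetical annual figures.
benefits = {"labor_savings": 120_000, "revenue_increase": 30_000}
costs = {"licences": 60_000, "implementation": 25_000, "maintenance": 15_000}

roi = roi_percent(sum(benefits.values()), sum(costs.values()))
print(f"ROI: {roi:.1f}%")
```

Listing implementation and maintenance as explicit cost lines makes it harder to overstate returns by counting licence fees alone.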

Compare KPIs over defined intervals to assess impact trends. Monthly comparisons reveal short-term patterns while quarterly reviews show sustained improvements. Look for consistent trends rather than single data points. One exceptional month might reflect seasonal factors rather than AI impact.
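One simple way to separate a trend from a one-off spike is to fit a straight line through the periodic values and look at its slope. The sketch below implements an ordinary least-squares slope by hand; the monthly figures are invented for illustration, and a straight line is only a rough model of real KPI behavior.

```python
def trend_slope(values):
    """Least-squares slope of values against their position (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


# Hypothetical monthly throughput figures.
steady_growth = [100, 104, 109, 113, 118]
single_spike = [100, 100, 130, 100, 100]

print("steady growth slope:", trend_slope(steady_growth))
print("single spike slope:", trend_slope(single_spike))
```

The series with one exceptional month in the middle has a slope of zero, while the steadily improving series shows a clear positive slope.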

Avoid common data pitfalls that undermine measurement validity. Data bias occurs when collection methods favor certain outcomes or exclude relevant populations. Incomplete datasets miss critical context needed for accurate interpretation. Selection bias happens when comparison groups differ in ways beyond AI adoption. Address these issues through careful sampling, comprehensive collection, and transparent methodology.

Pro Tip: Create standardized checklists for your data collection process to ensure consistency across teams and time periods. They prevent gaps and maintain data quality throughout extended measurement periods.

Verifying and optimizing AI impact: Monitoring and continuous improvement

Raw data tells only part of the story. Verification requires interpreting results to understand not just what changed, but why. This deeper analysis reveals root causes and optimization opportunities that surface-level metrics miss.

Look beyond headline numbers to detect underlying patterns. A 30% efficiency gain sounds impressive until you discover it only applies to simple cases while complex scenarios show no improvement. Dig into segmented data to identify where AI delivers value and where it falls short. This granular understanding guides targeted improvements.
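Segmenting the data can be as simple as grouping records by case type and computing the gain within each group. The sketch below assumes each record carries a segment label plus a before and after measurement; the field layout and the sample values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def gain_by_segment(records):
    """Percent reduction per segment.

    records: iterable of (segment, value_before, value_after) tuples.
    Assumes the mean 'before' value of every segment is non-zero.
    """
    grouped = defaultdict(lambda: ([], []))
    for segment, before, after in records:
        grouped[segment][0].append(before)
        grouped[segment][1].append(after)
    return {
        segment: (mean(before) - mean(after)) / mean(before) * 100.0
        for segment, (before, after) in grouped.items()
    }


# Hypothetical handling times (minutes) before and after AI, by case type.
cases = [
    ("simple", 10, 5),
    ("simple", 12, 6),
    ("complex", 40, 40),
    ("complex", 50, 50),
]
for segment, gain in gain_by_segment(cases).items():
    print(f"{segment}: {gain:.0f}% faster")
```

Here the overall average hides the fact that all of the improvement comes from simple cases, which is exactly the pattern a headline number can mask.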

Common measurement challenges complicate interpretation and require careful handling:

- Attribution errors occur when you credit AI for improvements caused by other factors like better training, process changes, or external trends

- Changing conditions make comparisons difficult when market dynamics, regulations, or organizational priorities shift during measurement

- Data drift happens when input characteristics change over time, affecting AI performance in ways that skew impact metrics

- Lag effects delay visible impact, causing premature conclusions before AI benefits fully materialize
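Of the challenges above, data drift is the easiest to check numerically. A very rough first check is to compare a recent window of an input feature against a reference window and express the shift in the mean in units of the reference standard deviation. This is a simple heuristic rather than a complete drift test, and the feature, window sizes, and warning threshold below are all assumptions.

```python
from statistics import mean, stdev


def mean_shift_in_stdevs(reference, recent):
    """How far the recent mean sits from the reference mean, in reference stdevs."""
    spread = stdev(reference)
    if spread == 0:
        raise ValueError("reference window has no variation")
    return abs(mean(recent) - mean(reference)) / spread


# Hypothetical input feature: words per incoming support ticket.
reference_window = [80, 95, 100, 105, 120, 90, 110, 100]
recent_window = [150, 160, 145, 170]

shift = mean_shift_in_stdevs(reference_window, recent_window)
DRIFT_WARNING = 2.0  # assumed threshold; tune for your own data
print(f"shift = {shift:.1f} stdevs, drift suspected: {shift > DRIFT_WARNING}")
```

If inputs have shifted this much, a drop in impact metrics may reflect changed conditions rather than a failing AI system, so flag it before drawing conclusions.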

Continuous monitoring and iterative optimization are key to sustaining AI performance and value. Implement dashboards that track KPIs in real time, alerting you to performance degradation before it becomes critical. Automated monitoring catches issues early when corrections cost less and cause minimal disruption.
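The alerting logic behind such a dashboard can start out very simple: compare each KPI's latest value to an agreed limit. The sketch below is a minimal illustration and not tied to any dashboard product; the KPI names, limits, and values are hypothetical.

```python
def degradation_alerts(latest, thresholds):
    """Return the names of KPIs that breach their limits.

    latest: {kpi_name: value}
    thresholds: {kpi_name: (kind, limit)} where kind is "max" (value must
    stay at or below limit) or "min" (value must stay at or above limit).
    KPIs missing from `latest` are skipped.
    """
    breached = []
    for name, (kind, limit) in thresholds.items():
        if name not in latest:
            continue
        value = latest[name]
        if (kind == "max" and value > limit) or (kind == "min" and value < limit):
            breached.append(name)
    return breached


# Hypothetical limits agreed with stakeholders.
thresholds = {
    "avg_response_minutes": ("max", 6.0),
    "satisfaction_score": ("min", 4.0),
}
latest = {"avg_response_minutes": 7.2, "satisfaction_score": 4.3}

print("KPIs needing attention:", degradation_alerts(latest, thresholds))
```

Keeping the limits in one place also documents what “performance degradation” means for each metric, which helps when reviews revisit the thresholds.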

Compare different AI approaches when possible to identify best practices. If you deployed multiple AI solutions or configurations, analyze which delivers superior results. This comparative analysis reveals optimization opportunities and informs future AI investments. Even small configuration changes can produce significant impact differences.

Schedule regular review cycles to assess AI performance and adjust strategies. Monthly operational reviews catch tactical issues while quarterly strategic reviews evaluate whether AI continues serving organizational goals. Annual comprehensive assessments determine if AI investments warrant continuation, expansion, or redirection.

| Review Type | Frequency | Focus Areas | Key Questions |
|---|---|---|---|
| Operational | Monthly | Performance metrics, user feedback, technical issues | Is AI performing as expected? What quick fixes improve results? |
| Strategic | Quarterly | Goal alignment, ROI trends, scaling opportunities | Does AI still serve our objectives? Where should we expand or adjust? |
| Comprehensive | Annually | Total impact, competitive positioning, future direction | Should we continue, expand, modify, or discontinue this AI investment? |

Refine KPIs as your understanding deepens. Initial metrics might miss important dimensions of impact that become apparent through use. Add new measures that capture emerging value while retiring metrics that prove uninformative. This evolution keeps measurement relevant as AI matures.

Pro Tip: Build a feedback loop connecting measurement insights directly to AI development teams. When impact data reveals performance gaps or optimization opportunities, ensure technical teams receive actionable intelligence to drive improvements. This connection transforms measurement from reporting exercise to continuous improvement engine.

Explore AI tools and training for enhanced impact

Measuring AI impact effectively requires both strategic frameworks and practical expertise. The right resources help you move from theory to implementation, ensuring your measurement efforts produce actionable insights rather than just reports.

Our AI strategy guide for SMEs provides comprehensive frameworks for planning and executing AI initiatives with built-in measurement approaches. These resources help you avoid common pitfalls and accelerate your path to measurable results.

Building internal capability strengthens your long-term measurement success. AI awareness training equips your teams with the knowledge needed to collect quality data, interpret results accurately, and act on insights effectively. When stakeholders understand AI fundamentals, they contribute more meaningfully to measurement design and implementation.

Negotiating AI investments requires demonstrating clear value propositions backed by solid impact data. Our AI salary negotiation guide extends beyond compensation to help you articulate AI value in terms that resonate with decision-makers, strengthening your ability to secure resources for measurement initiatives.

Frequently asked questions about measuring AI impact

How do I choose the best KPIs for my AI project?

Select KPIs that directly connect to your specific business objectives and can be measured consistently before and after AI implementation. Focus on metrics that inform decisions rather than vanity numbers. For efficiency goals, prioritize time savings and cost reductions. For engagement objectives, track interaction frequency and satisfaction scores.

What are effective methods to measure user engagement improvements?

Track behavioral metrics like session duration, feature adoption rates, return frequency, and task completion percentages. Combine these quantitative measures with qualitative feedback through surveys and user interviews. Compare engagement patterns between AI users and control groups to isolate AI’s specific contribution to engagement changes.

How can I ensure data quality for accurate AI impact assessment?

Establish standardized collection protocols, train data collectors thoroughly, and implement validation checks at collection points. Use automated tools to flag anomalies and inconsistencies. Maintain comprehensive documentation of data sources, collection methods, and any changes to procedures. Regular AI penetration testing helps identify vulnerabilities that could compromise data integrity.

What are common pitfalls to avoid when analyzing AI ROI?

Avoid attributing all improvements to AI without accounting for other factors like training, process changes, or market trends. Don’t ignore implementation and maintenance costs when calculating returns. Resist cherry-picking favorable time periods or user segments. Include both direct financial benefits and indirect value like improved satisfaction or reduced risk for comprehensive ROI assessment.

How often should AI impact measurement be updated?

Conduct operational reviews monthly to catch performance issues early. Perform strategic assessments quarterly to evaluate goal alignment and identify optimization opportunities. Complete comprehensive annual reviews to determine overall AI value and inform investment decisions. Adjust frequency based on AI maturity, with newer implementations requiring more frequent monitoring than established systems.
